How Many Companies Are Using AI? Latest Stats (June 2026)

AI adoption is now widespread, but real business value depends on clear outcomes, strong data infrastructure, and measurable ROI, not just deploying new tools.

AI enablement is the strategic process of building the infrastructure, processes, and governance systems enterprises need to move AI from isolated experiments to scalable, production-grade capabilities that drive measurable business outcomes across every function.

Most enterprises experiment with AI, but few ever scale it. The gap between running an AI pilot and deploying AI as a reliable, enterprise-wide capability is where the vast majority of organizations stall, waste budgets, and lose competitive ground.

According to RAND Corporation research, over 80% of AI projects fail to deliver their intended business value. MIT's Project NANDA confirmed this further, finding that only about 5% of generative AI pilot programs achieve measurable revenue acceleration.

The problem is not the technology. The models work. The infrastructure scales. What fails is the organizational machinery surrounding AI: disconnected data, unclear governance, absent strategy, and the widespread assumption that buying an AI tool is the same thing as becoming an AI-powered enterprise.

This is precisely the problem that AI enablement solves. AI enablement is not a single tool purchase or a one-time training initiative. It is a comprehensive system that unifies infrastructure, process, and strategy to move AI from fragmented experimentation into scalable, governed, production-grade operations. This AI enablement guide walks you through everything you need to understand, plan, and execute a successful enterprise AI enablement strategy, from foundational concepts to maturity models, frameworks, real-world use cases, and the tools required to make it work.

AI enablement is the process of equipping an enterprise with the infrastructure, data systems, governance frameworks, and organizational processes needed to deploy, integrate, and scale artificial intelligence across business functions. It goes beyond adopting AI tools. Where AI adoption asks "are we using AI?", AI enablement asks "can we use AI reliably, repeatedly, and at scale?"

Think of it this way: AI adoption is purchasing a vehicle. AI enablement is building the roads, fuel stations, and driver training that make transportation work at a national level.

At its core, AI enablement functions as a system with three interdependent pillars. The first is infrastructure: the data platforms, compute resources, APIs, and integration layers that give AI models access to the information and environments they need. The second is process: the workflows, operating models, and MLOps practices that govern how AI is built, validated, deployed, and maintained. The third is strategy: the alignment between AI initiatives and business objectives that ensures every model, agent, or automation is connected to a measurable outcome.

When these three pillars work together, AI stops being a series of disconnected experiments and starts behaving like a core enterprise capability.

These terms are often used interchangeably, but they represent distinct stages and mindsets in an organization's AI journey.

| Dimension | AI Adoption | AI Enablement | AI Transformation |
| --- | --- | --- | --- |
| Focus | Using AI tools in specific tasks | Building systems to scale AI across the enterprise | Reinventing the business model through AI |
| Scope | Individual teams or use cases | Cross-functional infrastructure and governance | Enterprise-wide strategic reinvention |
| Outcome | Task-level efficiency gains | Repeatable, scalable AI deployment capability | AI-native operations and competitive advantage |
| Typical Stage | Early experimentation | Foundation-building and operationalization | Mature, AI-first enterprise |
| Risk Without It | Shadow AI and fragmented tools | Pilot-to-production failure at 80%+ rates | Strategic stagnation despite AI investment |
| Key Question | "Are we using AI?" | "Can we scale AI reliably?" | "Is AI reshaping how we compete?" |

While comprehensive AI enablement involves many moving parts, the foundational process can be distilled into three essential steps that every enterprise must get right before scaling AI: data preparation, seamless connectivity, and secure exposure. Many enterprises begin with an initial AI readiness assessment to evaluate data, systems, and governance maturity.

AI is only as effective as the data it consumes. Data preparation involves cleaning, structuring, enriching, and standardizing enterprise data so it is ready for AI consumption. This includes establishing data quality standards, implementing metadata tagging, removing redundant and obsolete information, and creating consistent taxonomies across business systems.
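To make the data preparation step concrete, here is a minimal sketch of standardizing, de-duplicating, and pruning records. The field names (`email`, `name`, `updated`) and the retention cutoff are assumptions chosen for illustration, not part of any specific platform.

```python
from datetime import date


def standardize(record: dict) -> dict:
    """Normalize one raw customer record to a consistent schema."""
    return {
        "email": record["email"].strip().lower(),
        "name": " ".join(record["name"].split()).title(),
        "updated": date.fromisoformat(record["updated"]),
    }


def prepare(records: list[dict], cutoff: date) -> list[dict]:
    """Standardize records, drop obsolete ones, keep the newest per email."""
    latest: dict[str, dict] = {}
    for raw in records:
        rec = standardize(raw)
        if rec["updated"] < cutoff:
            continue  # obsolete: older than the (assumed) retention cutoff
        current = latest.get(rec["email"])
        if current is None or rec["updated"] > current["updated"]:
            latest[rec["email"]] = rec
    return sorted(latest.values(), key=lambda r: r["email"])


# Hypothetical sample data: two duplicates of one customer and one stale record.
raw_records = [
    {"email": " Ana@Example.com ", "name": "ana  lopez", "updated": "2025-03-01"},
    {"email": "ana@example.com", "name": "Ana Lopez", "updated": "2025-06-15"},
    {"email": "bo@example.com", "name": "bo chen", "updated": "2019-01-10"},
]
clean = prepare(raw_records, cutoff=date(2024, 1, 1))
```

In practice these rules would live in a shared pipeline so that every downstream AI use case consumes the same cleaned records.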

Enterprise data typically lives across dozens of siloed applications, from CRMs and ERPs to document management systems and cloud storage. Seamless connectivity means building the integration layer, through APIs, graph connectors, and middleware, that allows AI systems to access and synthesize information from across the entire enterprise. Without this connectivity, AI operates on partial knowledge, producing incomplete or inaccurate outputs that erode trust and adoption.
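As a rough illustration of what an integration layer does once data is reachable, the sketch below joins customer rows from a CRM with order rows from an ERP on a shared key. The record shapes and the `customer_id` key are hypothetical; a real integration would pull these rows through APIs or connectors.

```python
def unified_view(crm_rows: list[dict], erp_orders: list[dict]) -> tuple[dict, list[str]]:
    """Join CRM customers with ERP orders on a shared customer_id key.

    Returns the per-customer view plus the IDs of orders that matched no
    CRM customer, so gaps between systems are surfaced rather than hidden.
    """
    view = {
        row["customer_id"]: {"name": row["name"], "open_orders": 0, "open_value": 0.0}
        for row in crm_rows
    }
    unmatched: list[str] = []
    for order in erp_orders:
        customer = view.get(order["customer_id"])
        if customer is None:
            unmatched.append(order["order_id"])
            continue
        if order["status"] == "open":
            customer["open_orders"] += 1
            customer["open_value"] += order["value"]
    return view, unmatched


# Hypothetical rows standing in for CRM and ERP exports.
crm = [
    {"customer_id": "C1", "name": "Acme"},
    {"customer_id": "C2", "name": "Globex"},
]
erp = [
    {"order_id": "O1", "customer_id": "C1", "status": "open", "value": 1200.0},
    {"order_id": "O2", "customer_id": "C1", "status": "closed", "value": 300.0},
    {"order_id": "O3", "customer_id": "C9", "status": "open", "value": 50.0},
]
view, unmatched = unified_view(crm, erp)
```

Tracking unmatched records is a deliberate choice here: mismatched keys between systems are one way AI ends up operating on partial knowledge.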

Not all data should be accessible to all AI models or all users. Secure exposure involves implementing role-based access controls, data governance policies, and compliance frameworks that determine what information AI can access, who can see AI-generated outputs, and how sensitive data is protected throughout the pipeline. This step ensures that AI enablement does not introduce new risks around privacy, regulatory compliance, or data breaches.
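One way to picture secure exposure is a role check applied before any content reaches an AI model. The sketch below is a simplified assumption of how that filter might look; the `Document` type, role names, and sample corpus are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset


def visible_documents(docs: list[Document], user_roles: set[str]) -> list[Document]:
    """Return only documents the user may see, before any AI retrieval runs."""
    return [d for d in docs if d.allowed_roles & user_roles]


# Hypothetical corpus with per-document role restrictions.
corpus = [
    Document("hr-001", "Salary bands for 2025", frozenset({"hr"})),
    Document("kb-101", "How to reset a password", frozenset({"hr", "support", "sales"})),
    Document("fin-007", "Quarterly forecast draft", frozenset({"finance"})),
]

support_view = visible_documents(corpus, {"support"})
```

Filtering before retrieval, rather than after generation, means restricted text never enters the model's context in the first place.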

The statistics around AI project failure represent real budget losses, competitive setbacks, and organizational fatigue that make each subsequent AI initiative harder to fund and execute. Here are the specific reasons why enterprise AI enablement has become non-negotiable.

Most organizations begin their AI journey with a pilot. A team finds a use case, cleans up some data, gets a model working, and the results look promising. The problem surfaces when the organization tries to replicate that success. The second and third use cases take longer, require different data, and introduce new integration challenges. Without an enablement layer, each pilot becomes a bespoke project that cannot benefit from or contribute to a shared foundation.

This pattern is something we see repeatedly at Folio3 AI. Abdul Sami, Head of AI Development with over 20 years of experience leading ML, deep learning, and LLM projects, puts it directly: "The most common mistake we see is enterprises treating AI as a plug-and-play technology. They buy a tool, hand it to a team, and expect transformation. But the organizations that succeed are the ones that invest in the foundation first: clean data pipelines, clear governance, and a strategy that ties every AI initiative back to a business outcome. Enablement is what separates a successful deployment from an expensive experiment."

When customer data lives in the CRM, operational data sits in the ERP, and financial data resides in a separate accounting platform, AI models can only see fragments of the full picture. These data silos limit the quality, accuracy, and relevance of AI outputs. An AI-powered demand forecasting model, for example, cannot deliver accurate predictions if it lacks access to real-time supply chain data sitting in a separate system. Data silos are not just a technical inconvenience; they are the structural bottleneck that prevents AI from generating enterprise-wide value.

AI systems without clear accountability, explainability standards, or override mechanisms create significant risk: regulatory compliance issues (particularly in financial services and healthcare), reputational risks from biased outputs, and operational risks when recommendations are acted on without validation. McKinsey's Global AI Survey 2026 found that 77% of AI project failures were organizational, not technical.

Many enterprises adopt AI in isolation: one team uses a chatbot, another experiments with document extraction, and a third deploys a recommendation engine. Without a shared platform or operating model, these tools create redundant infrastructure, inconsistent outputs, and mounting technical debt.

The "garbage in, garbage out" principle applies with force in AI. Incomplete records, inconsistent formats, duplicate entries, and outdated information directly undermine model performance. Sixty-three percent of organizations either lack or are unsure whether they have the right data management practices for AI.

When enterprises approach AI without defined objectives, success metrics, or governance, they drift into unfocused experimentation. Projects are funded based on hype rather than alignment. Many organizations turn to enterprise AI strategy consultants to bring structure, ensuring every initiative ties back to measurable business outcomes from the start.

Many enterprises run on legacy technology that was never designed for AI workloads. Integrating ML models and real-time pipelines into older systems requires careful architectural planning, middleware development, and sometimes significant modernization.

Building AI capabilities that scale requires a structured, repeatable approach. The following AI adoption framework provides a step-by-step model that enterprises can adapt to their specific context, industry, and maturity level.

Start with business outcomes, not technology. Identify the specific operational metrics, revenue targets, or customer experience improvements that AI should impact. According to research tracking successful AI deployments, projects with clear pre-approval success metrics achieve 54% success rates compared to just 12% for those without defined metrics. Map high-impact use cases to business KPIs and secure sustained executive sponsorship before moving forward.

Conduct a thorough audit of your enterprise data landscape. Evaluate data quality, accessibility, governance maturity, and infrastructure capacity. This assessment should cover data storage and management systems, compute resources, integration capabilities, security posture, and existing analytics infrastructure. The goal is to identify gaps between your current state and what AI workloads require.

Define the organizational structure, roles, and processes that will govern AI development and deployment. This includes establishing an AI Center of Excellence or similar coordination function, defining decision rights for AI projects, creating standard workflows for model development and validation, and determining how cross-functional teams will collaborate on AI initiatives. The operating model should clarify who builds, who validates, who deploys, and who monitors AI systems.

With strategy, data, and operating model in place, begin developing models for prioritized use cases. Select appropriate algorithms, train on prepared datasets, conduct rigorous testing, and ensure compliance with ethical and regulatory requirements. Start with focused use cases that demonstrate value quickly while building organizational confidence.

Move validated models into production. Establish CI/CD pipelines for deployment, integrate AI outputs into business workflows, implement monitoring systems, and build the APIs that connect AI with enterprise applications. Successful deployment is an ongoing process of integration and refinement.

AI models in production require continuous monitoring for performance degradation, data drift, and changing business conditions. Establish feedback loops that capture model performance data, user feedback, and business impact metrics. Use this information to retrain models, optimize pipelines, and identify new use cases. This iterative cycle is what transforms AI from a static deployment into a continuously improving enterprise capability.
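Data drift monitoring can be sketched with the Population Stability Index (PSI), one common drift statistic among several. The function below is a minimal illustration, not a prescribed method: the bin count, the small floor for empty bins, and the synthetic samples are all assumptions.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic feature values: a training baseline and a shifted production sample.
baseline = [i / 100 for i in range(100)]
shifted = [v + 0.5 for v in baseline]

no_drift = psi(baseline, baseline)
drift = psi(baseline, shifted)
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the feature and the business context.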

Understanding where your organization stands on the AI maturity spectrum is essential for planning your AI transformation roadmap. The following five-stage model, informed by frameworks from MIT CISR, Gartner, and MITRE, provides a practical assessment tool.

Organizations at this stage are running isolated AI pilots, typically driven by individual teams or technology enthusiasts. There is no enterprise-wide strategy, data governance is minimal, and AI activities are funded as one-off projects. MIT CISR research found that 28% of enterprises occupy this stage, and their financial performance trends below the industry average.

At this stage, the organization has recognized the need for shared infrastructure and begins investing in data platforms, governance frameworks, and cross-functional coordination. Early enablement activities include defining AI success metrics, piloting standardized data pipelines, and establishing initial governance policies. The hardest part of this stage, as MIT researchers noted, is the cultural shift from command-and-control to a more collaborative, decentralized decision-making model.

AI moves from experiments to repeatable, production-grade workflows. The organization has established MLOps practices, standardized model development processes, and integrated AI into core business systems. AI governance frameworks are active and enforced. Cross-functional teams collaborate on AI initiatives with clear roles and responsibilities. Organizations at this stage begin to see measurable returns on their AI investments.

AI is embedded across multiple business functions at enterprise scale. The organization has a mature platform, comprehensive governance, and can rapidly develop and deploy new use cases. AI is no longer a project; it is a capability teams extend with confidence. Financial performance is consistently above industry average.

The business model, operations, and competitive strategy are fundamentally shaped by AI. Proprietary AI capabilities create competitive advantages, and AI-driven decision-making extends from operations to strategic planning. Reaching this stage requires deep organizational transformation, not just technical maturity.

AI enablement looks different depending on the industry, but the underlying patterns of success are remarkably consistent. Here are examples of how AI enablement drives results across key sectors.

Healthcare organizations face some of the most complex data challenges. Patient data is fragmented across EHRs, imaging systems, lab databases, and billing platforms. Successful AI enablement in healthcare requires unifying these sources under strict HIPAA-compliant governance before deploying clinical applications.

Financial institutions have one of the highest AI failure rates at over 82%, largely due to regulatory complexity and bias concerns. AI enablement here focuses on building governance that satisfies regulators, establishing model validation and explainability, and creating audit trails for AI-driven decisions.

In retail, AI enablement centers on unifying customer data across online and offline channels to create a comprehensive view of customer behavior. This requires integrating POS systems, e-commerce platforms, loyalty programs, and inventory management.

In manufacturing, AI enablement involves bridging the gap between operational technology (OT) on the factory floor and information technology (IT) in the enterprise. This OT/IT integration requires specialized connectors, edge computing, and real-time data streaming.

Understanding the challenges is the first step toward overcoming them. Here are the most significant barriers enterprises face when trying to scale AI from pilot to production.

Data locked in departmental silos prevents AI from accessing comprehensive datasets. Poor data quality, including missing values, inconsistent formats, and outdated records, directly degrades model performance. Sixty-three percent of organizations either lack or are unsure whether they have the right data management practices for AI.

Enterprises with decades-old ERP systems and mainframe applications face significant integration challenges. These systems often lack modern APIs, use proprietary formats, and cannot support real-time data access. Solutions range from API wrappers and middleware to phased legacy replacement.

Demand for data scientists, ML engineers, and MLOps specialists outpaces supply. Half of low-maturity organizations cite a lack of technical readiness and talent for complex deployments. Addressing this requires hiring, upskilling, partnerships, and an AI workforce development program that pairs low-code platforms with structured internal training to democratize AI development across teams.

Organizations need frameworks addressing model explainability, algorithmic bias, data privacy, and regulatory compliance. Without them, AI deployments create legal, reputational, and operational risks that can exceed the value they generate.

Muhammad Nasir, Senior Project Manager at Folio3 with over 15 years of experience managing complex AI/ML and computer vision projects for enterprise clients, has seen this challenge firsthand across industries like insurance, agriculture, and livestock management.

"When I look at the AI projects that delivered real ROI versus those that didn't," he says, "the difference almost never comes down to the model or the algorithm. It comes down to project readiness: did the client have their data organized, did they have executive buy-in, and did they have a clear picture of what success looks like? AI enablement is essentially the discipline of getting all of those prerequisites right before the first line of code is written."

Scaling AI in enterprise environments requires deliberate architectural and organizational decisions. Here is a practical approach to scaling AI the right way.

Rather than allowing each team to build its own AI stack, invest in a centralized platform providing shared compute resources, model repositories, data access layers, and deployment pipelines. This reduces redundancy, ensures consistency, and accelerates time-to-value.

Create enterprise-wide standards for data formats, quality metrics, and processing pipelines. Standardization enables reusability: data prepared for one use case can be leveraged for others, reducing time and cost for new deployments.

MLOps brings DevOps principles to AI: automated model training and testing, version control for models and datasets, CI/CD pipelines for deployment, and automated monitoring. These practices transform AI from artisanal model building into a scalable engineering discipline.
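The versioning and automated-gate ideas behind MLOps can be illustrated with a toy model registry. This is a sketch under stated assumptions: the `Registry` class, the accuracy metric, and the 0.80 threshold are hypothetical and do not represent the API of any particular MLOps tool.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelVersion:
    version: int
    dataset_hash: str  # ties the model to the exact data it was trained on
    accuracy: float


@dataclass
class Registry:
    min_accuracy: float
    versions: list = field(default_factory=list)
    production: Optional[ModelVersion] = None

    def register(self, dataset_hash: str, accuracy: float) -> ModelVersion:
        """Record a new immutable version with its dataset fingerprint."""
        mv = ModelVersion(len(self.versions) + 1, dataset_hash, accuracy)
        self.versions.append(mv)
        return mv

    def promote(self, candidate: ModelVersion) -> bool:
        """Promote only if the candidate clears the bar and beats production."""
        if candidate.accuracy < self.min_accuracy:
            return False
        if self.production and candidate.accuracy <= self.production.accuracy:
            return False
        self.production = candidate
        return True


registry = Registry(min_accuracy=0.80)
v1 = registry.register("a1b2", 0.84)
v2 = registry.register("c3d4", 0.79)
v3 = registry.register("e5f6", 0.83)
results = [registry.promote(v) for v in (v1, v2, v3)]
```

In a CI/CD pipeline, a gate like `promote` would run automatically after evaluation, so no model reaches production on the strength of a manual judgment alone.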

Implement governance covering the entire AI lifecycle: model risk management, fairness assessment, data privacy controls, and clear accountability structures for AI-driven decisions.

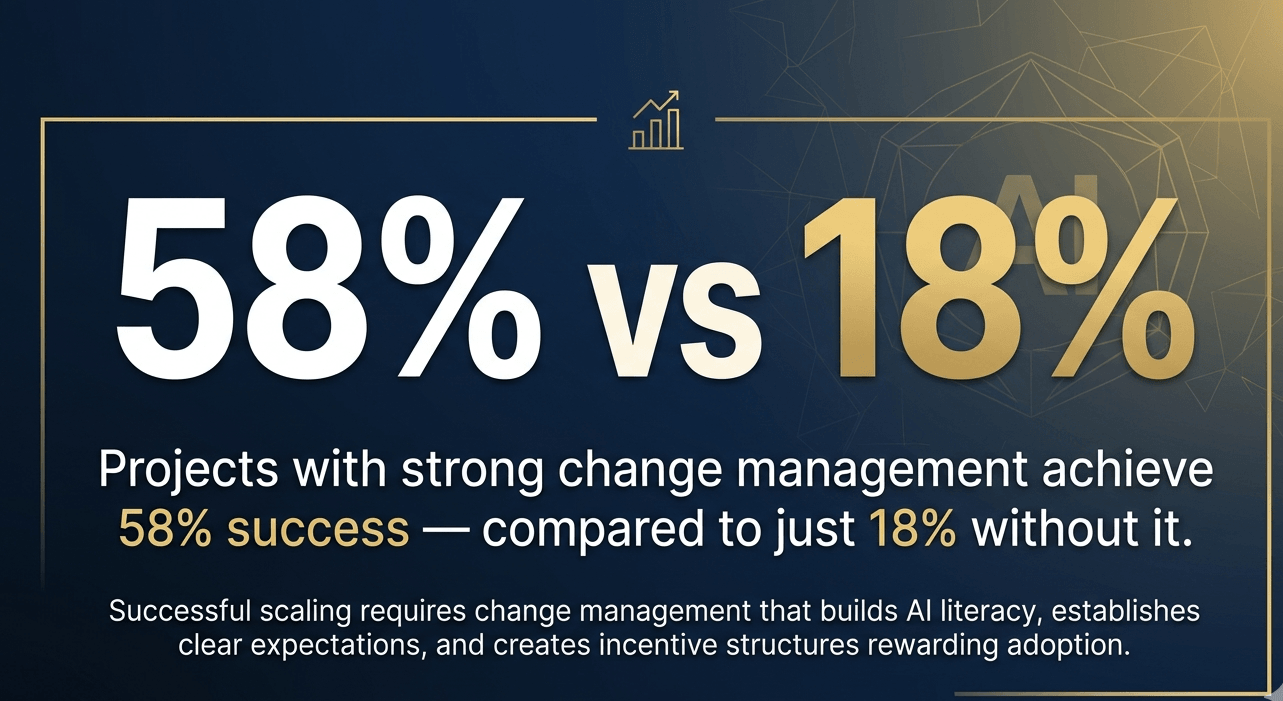

Successful scaling requires change management that builds AI literacy, establishes clear expectations, and creates incentive structures rewarding adoption. Projects with comprehensive change management achieve 58% success rates compared to 18% without it.

A comprehensive AI enablement technology stack spans several categories. Choosing the right combination depends on your industry, data maturity, and use cases, which is why many enterprises work with an AI enablement partner to architect a stack that fits their context rather than defaulting to one-size-fits-all platforms.

Cloud data warehouses (Snowflake, BigQuery, Redshift), data lake solutions (Databricks, Apache Spark), and integration tools (Fivetran, Airbyte, Informatica) form the storage and processing backbone. The platform choice matters less than the discipline of structuring data for AI: consistent schemas, quality gates, and governed access separate functional infrastructure from expensive storage.

End-to-end platforms like AWS SageMaker, Google Vertex AI, Azure Machine Learning, and Databricks ML provide managed environments for building, training, and deploying models. For enterprises with domain-specific needs, such as computer vision for quality inspection or predictive analytics for demand forecasting, custom model development layered on top of these platforms often delivers stronger ROI than generic solutions.

MLflow, Kubeflow, Weights & Biases, and Seldon Core automate the machine learning lifecycle, covering experiment tracking, pipeline orchestration, model serving, and monitoring. At Folio3 AI, our teams integrate MLOps tooling into every production deployment to ensure models do not degrade silently after launch, a common failure point for enterprises that treat deployment as the finish line.

Foundation model providers (OpenAI, Anthropic, Google) combined with orchestration frameworks like LangChain and LlamaIndex and vector databases (Pinecone, Weaviate, Qdrant) for RAG-based applications form the generative AI enablement stack. Enterprises deploying AI agents increasingly rely on multi-agent orchestration frameworks like CrewAI and LangGraph to coordinate autonomous workflows across business systems.

API management platforms (Apigee, Kong), integration solutions (MuleSoft, Boomi), and specialized AI connectors link AI models with CRMs, ERPs, and business applications. This is where many enterprises underinvest, and it is precisely the layer where Folio3 AI's integration expertise bridges the gap between a working model and a production system that drives measurable outcomes.

An effective AI enablement strategy aligns technology investment with business outcomes. Here is a practical framework for building one.

Map your organization's highest-value processes and identify where AI can create measurable improvements. Prioritize based on business impact, data availability, technical feasibility, and organizational readiness. Focus on two to three initial use cases that demonstrate value quickly.
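The four prioritization criteria above can be turned into a simple weighted score. The sketch below assumes each criterion is rated from 1 to 5; the weights and the candidate use cases are illustrative assumptions that each organization would set for itself.

```python
# Assumed weights for the four criteria; they sum to 1.0.
WEIGHTS = {"impact": 0.40, "data": 0.25, "feasibility": 0.20, "readiness": 0.15}


def score(use_case: dict) -> float:
    """Weighted sum of the 1-5 ratings for one candidate use case."""
    return sum(weight * use_case[name] for name, weight in WEIGHTS.items())


def shortlist(use_cases: list[dict], top_n: int = 3) -> list[str]:
    """Return the names of the highest-scoring use cases, best first."""
    ranked = sorted(use_cases, key=score, reverse=True)
    return [uc["name"] for uc in ranked[:top_n]]


# Hypothetical candidates with hypothetical ratings.
candidates = [
    {"name": "demand_forecasting", "impact": 5, "data": 4, "feasibility": 4, "readiness": 3},
    {"name": "invoice_extraction", "impact": 3, "data": 5, "feasibility": 5, "readiness": 4},
    {"name": "support_chatbot", "impact": 2, "data": 3, "feasibility": 4, "readiness": 4},
    {"name": "pricing_agent", "impact": 5, "data": 2, "feasibility": 2, "readiness": 2},
]
initial_focus = shortlist(candidates)
```

A score like this is a conversation starter rather than a verdict: it makes the trade-offs between impact and readiness explicit before budgets are committed.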

Every AI initiative should connect to a measurable outcome: revenue growth, cost reduction, or efficiency gain. Define success metrics before development begins, tracking both leading indicators (adoption rates, processing improvements) and lagging indicators (revenue impact, cost savings) over 90-day and 180-day horizons.

AI enablement requires collaboration across data engineering, business operations, IT, legal, and compliance. Organizations with sustained executive sponsorship achieve 68% AI project success rates compared to just 11% for those that lose sponsorship.

Build infrastructure that can grow with your AI ambitions: cloud-native architecture, modular data pipelines, containerized model deployment, and flexible compute resources that scale on demand. Avoid infrastructure decisions that lock you into specific vendors or technologies.

Do not treat governance as an afterthought. Establish data governance, model governance, and AI ethics frameworks at the beginning of your enablement journey. Early governance investment prevents the costly retrofitting that organizations face when gaps surface after AI systems are already in production.

The AI enablement landscape is evolving rapidly. Here are the trends shaping the future of enterprise AI enablement.

Generative AI is moving beyond content creation into code generation, product design, scientific research, and business process automation. Enterprise enablement strategies must account for larger compute needs, more complex governance requirements, and new evaluation challenges around output quality and accuracy.

Agentic AI, where systems independently plan, execute, and adapt multi-step workflows, represents the next frontier. Enabling agentic AI requires robust orchestration infrastructure, clear decision rights frameworks, and governance models for autonomous decision-making. Gartner predicts that over 40% of agentic AI projects will be canceled by 2027, largely because organizations attempt to deploy agentic capabilities before their enablement foundations can support them.

Low-code and no-code AI platforms are making AI development accessible to business users without deep technical expertise. This democratization expands the builder pool but increases the need for governance, quality controls, and standardized practices. Enablement strategies must balance accessibility with accountability.

As AI regulation matures globally (EU AI Act, evolving US federal guidelines, sector-specific requirements), governance frameworks must become more sophisticated, dynamic, and auditable. AI enablement strategies that embed governance from the start will have a significant advantage over those that treat compliance as a retroactive exercise.

AI enablement is the critical bridge between AI experimentation and AI transformation. Without it, enterprises remain trapped in isolated pilots that fail to scale, budgets that fail to deliver returns, and confidence that erodes with each failed initiative.

The path forward is clear. Start with strategy, not technology. Invest in data readiness before model development. Build governance from the beginning. Establish operating models with clear ownership. And treat enablement not as a project with a finish line, but as a continuously evolving capability.

The enterprises that invest in foundations before chasing the flashiest new model will transform AI from an expensive experiment into a sustainable competitive advantage.

If your organization is ready to move beyond experimentation and build AI capabilities that scale, Folio3 AI brings the strategy, engineering depth, and cross-industry experience to make it happen.

Get in touch to start your AI enablement journey.

AI enablement is the process of building the systems, processes, and governance an organization needs to use AI effectively at scale. It covers data preparation, infrastructure, strategy alignment, and ongoing optimization, ensuring AI investments produce real business outcomes rather than isolated experiments.

AI enablement creates shared infrastructure, standardized processes, and governance that allow repeatable AI deployment across functions. Without enablement, each AI project is standalone. With it, subsequent deployments build on existing foundations, reducing time-to-value and improving success rates.

The key components of AI enablement are data readiness and governance, scalable infrastructure, integration layers, MLOps practices, compliance frameworks, change management, and strategic alignment with business objectives.

Research consistently identifies the main causes of AI project failure as poor data quality, lack of business alignment, insufficient executive sponsorship, organizational resistance, and attempts to scale without foundational enablement infrastructure. Notably, 84% of failures are leadership-driven rather than technology-driven.

An AI enablement framework is a structured model that guides enterprises through building scalable AI capabilities, typically including stages for strategy definition, data assessment, operating model design, model development, production deployment, and continuous optimization. It prevents organizations from skipping critical steps on the path to scaling AI.
