Modern enterprises are moving from single-model pilots to fleets of production AI agents that plan, act, and coordinate across systems. The question isn't whether AI agents can work in production; it's how to make them safe, accountable, and unbiased at scale. Agentic AI could unlock between $2.6 trillion and $4.4 trillion annually across enterprise use cases, yet only 1% of organizations consider their AI adoption mature.

The gap between deployment speed and governance maturity is where the real risk lives. This guide gives executives a pragmatic playbook: define what agentic AI is, understand its risk surface, and operationalize AI agent governance with observability, ownership, risk-based controls, and bias management.

Drawing on leading guidance from industry and standards bodies, we translate governance theory into deployable practices that preserve trust and business value while curbing failure modes. If your mandate is to scale AI responsibly, this is how to do it, without slowing innovation.

Understanding Production AI Agents and Their Risks

Production AI agents are autonomous software systems that set goals, make real-time decisions, and execute actions in live environments without constant human intervention. Unlike traditional chatbots or rules-based bots, they plan, call tools and APIs, and coordinate tasks end to end. This is agentic AI: goal-setting and planning combined with autonomous action and orchestration across multiple systems.

Understanding the governance shift requires a clear contrast. Traditional AI governance asks: Is the output correct, fair, and compliant? Its primary concern is output risk, whether a model's response is accurate or biased.

Agentic AI governance must also ask: what can this system do, and who is accountable when it acts? The primary concern expands to action risk; an agent doesn't wait for human review before initiating a transaction, updating a record, or triggering a downstream workflow. That distinction fundamentally changes what governance must cover.

With autonomy comes a broader risk profile. Major categories include amplification of historical bias, opaque or non-interpretable decisions, elevated access and data privacy risk, privilege escalation where agents inherit credentials beyond their intended scope, adversarial attacks such as prompt injection or memory poisoning, and cascading failures across multi-agent networks. Autonomy, speed, and interconnectedness make AI agent governance harder; the system can fail quickly and silently at scale, outpacing manual oversight. This is why robust governance is essential from day one.

In short, production AI agents expand both capability and operational risk; AI agent governance is the discipline that keeps the two in balance.

Key Challenges in Managing AI Agents at Scale

Scaling from pilot to production introduces sharp governance and security challenges:

- Industry surveys show fewer than 20% of teams operate with formal AI safety policies, and under 10% perform external safety evaluations, creating blind spots for boards and regulators.

- The "identity explosion" arrives: thousands of non-human identities (agents, tools, skills) must be provisioned, tracked, and deprovisioned, often with permissions that don't match human access policies.

- Shadow AI compounds the problem. Engineering teams under pressure to ship agentic features often wire AI integrations directly into application and data layers without centralized controls for data access, model behavior, or monitoring.

- Accountability fragments as product, security, legal, and operations share partial oversight, while agent sprawl accelerates automation bias and overreliance on seemingly "smart" outcomes.

A pilot-to-production contrast clarifies the shift:

| Aspect | Pilot | Scaled Production |
| --- | --- | --- |
| Observability | Basic logs, limited | Tamper-evident, real-time |
| Owner Clarity | Single team, informal | Cross-functional, documented |
| Access Controls | Minimal, manual | Automated, policy-driven |
| Bias Monitoring | Ad hoc, manual | Continuous, automated |

Principles of Effective AI Agent Governance for Executives

Executives should anchor agent programs on a small set of durable principles: end-to-end observability and traceability (including tamper-evident logs and provenance), continuous runtime monitoring, proportional controls based on risk and autonomy, and clear assignment of decision ownership. These are consistent with industry guidance and regulatory expectations. Values matter, too: transparency, justice, non-maleficence (avoiding harm), responsibility, and privacy are central to trustworthy AI adoption.

Governance maturity doesn't happen at once — it progresses in stages. Organizations typically move through three levels:

- Informal governance — policies are ad hoc, and oversight is entirely reactive. There are no formal safety evaluations, no documented ownership, and incidents are managed on a case-by-case basis.

- Managed governance — policies exist and are documented, but enforcement is largely manual. Oversight depends on individual diligence rather than systemic controls.

- Automated governance — policy gates are embedded in CI/CD pipelines. Enforcement is real-time, audit trails are generated automatically, and governance KPIs are tracked as business metrics.

Executives should know where their organization sits on this scale. More importantly, they should treat advancement along it as a measurable business objective, not a compliance checkbox.
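
To make the "automated governance" stage concrete, here is a minimal sketch of a policy gate that a CI/CD job could run before an agent is deployed. The manifest fields (`owner`, `escalation_contact`, `decommission_plan`, `audit_logging`, `risk_tier`, `human_approval`) are hypothetical names chosen for illustration, not a standard schema.

```python
# Hypothetical fields that every agent deployment manifest must fill in.
REQUIRED_FIELDS = ("owner", "escalation_contact", "decommission_plan")


def policy_gate(manifest):
    """Return a list of violations; an empty list means the gate passes."""
    violations = [
        f"missing required field: {name}"
        for name in REQUIRED_FIELDS
        if not manifest.get(name)
    ]
    if not manifest.get("audit_logging", False):
        violations.append("audit logging must be enabled")
    # Assumed rule: high-risk agents need a human approval step.
    if manifest.get("risk_tier") == "high" and not manifest.get("human_approval", False):
        violations.append("high-risk agents require a human approval step")
    return violations


compliant = {
    "owner": "payments-team",
    "escalation_contact": "oncall@example.com",
    "decommission_plan": "revoke tokens, archive logs",
    "audit_logging": True,
    "risk_tier": "high",
    "human_approval": True,
}
incomplete = {"audit_logging": True, "risk_tier": "high"}

print(policy_gate(compliant))
print(policy_gate(incomplete))
```

In a pipeline, the job would fail the build whenever the returned list is non-empty, so that enforcement no longer depends on individual diligence.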

Principles checklist for AI agent governance

- Ensure tamper-evident logging and provenance tracking

- Implement continuous monitoring and anomaly detection

- Apply risk-proportional controls and human oversight

- Assign clear ownership and accountability for agent decisions

- Uphold transparency, fairness, and privacy standards across all deployments

- Assess governance maturity level and define a roadmap to the next stage

Strategies to Detect and Mitigate Bias in AI Agents

AI systems can amplify biases embedded in historical data and institutional processes, turning small skews into large-scale legal and reputational risk. Executives should require defense-in-depth across design-time and run-time:

- Data and outcome auditing to measure disparate impact pre- and post-deployment, with ongoing drift monitoring.

- Automated bias checks and thresholds inside production AI pipelines to stop or flag risky behavior before it reaches users.

- Regular adversarial testing and stress testing focused on edge cases, user subpopulations, and context shifts.

A practical flow:

- Collect and analyze agent training and interaction data for representativeness and sensitive attributes.

- Establish quantitative bias baselines across key outcomes.

- Set production alert thresholds and automated gating policies.

- Run simulated edge cases and counterfactual scenarios routinely.

- Commission periodic external reviews to validate methods and findings.
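
The baseline and gating steps above can be sketched as a simple disparate-impact check. This is an illustration under stated assumptions: the 0.8 threshold follows the "four-fifths" rule of thumb used in some disparate-impact screening, the group labels are placeholders, and a real program would select metrics and thresholds with legal and domain experts.

```python
from collections import defaultdict

# Assumed threshold: the "four-fifths" rule of thumb. Not legal advice.
DISPARATE_IMPACT_THRESHOLD = 0.8


def selection_rates(decisions):
    """Return the favorable-outcome rate per group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    rates = selection_rates(decisions)
    if not rates or max(rates.values()) == 0:
        return 1.0  # no data or no approvals: no measurable disparity
    return min(rates.values()) / max(rates.values())


def bias_gate(decisions, threshold=DISPARATE_IMPACT_THRESHOLD):
    """Return 'pass' or 'block' for a batch of agent decisions."""
    return "pass" if disparate_impact_ratio(decisions) >= threshold else "block"


# Hypothetical batch: group_a approved 60 of 100, group_b approved 40 of 100.
sample = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 40 + [("group_b", False)] * 60
)
print(round(disparate_impact_ratio(sample), 3), bias_gate(sample))
```

Running the same check on pre-deployment data gives the baseline; running it on rolling windows of production decisions gives the drift signal that feeds alerts and gating.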

Building Observability and Accountability Into AI Agent Systems

Observability is the ability to trace, monitor, and explain agent behavior and actions end-to-end, not just model outputs. AI outputs can drift without triggering technical failures, making silent degradation a real risk that standard monitoring won't catch. Implement tamper-evident logs, provenance tracing, unified dashboards for multi-agent telemetry, and automated alerts for anomalies or policy violations.

An emerging best practice for organizations managing large agent fleets is the use of dedicated governance agents: AI systems specifically designed to monitor, evaluate, and flag the behavior of other agents in real time. Rather than relying solely on rule-based alerts or human review, a governance agent can detect behavioral drift in a peer agent and trigger intervention before the issue reaches end users. This approach scales oversight in ways that human-only monitoring cannot.

A focus on accountability means AI agent governance must attribute specific actions to particular agents and detect harmful or out-of-policy behaviors. The following baseline helps:

| Observability Tools | Benefits | Risks If Omitted |
| --- | --- | --- |
| Tamper-evident logs | Audit trail, accountability | Undetected malicious actions |
| Provenance metadata | Trace data origin and changes | Data integrity issues |
| Unified dashboards | Real-time monitoring | Delayed incident response |
| Automated alerts | Early anomaly detection | Escalation failures |
| Governance agents | Scalable peer monitoring | Behavioral drift undetected at fleet scale |
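
One common way to make a log tamper-evident is to chain entries by hash, so that editing any past record invalidates every later entry. The sketch below is a minimal illustration using only the Python standard library. The `AuditLog` class and its record fields are hypothetical, and a production system would also anchor the latest hash somewhere the agent cannot write to.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash, record):
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to its predecessor."""

    def __init__(self):
        self.entries = []

    def append(self, agent_id, action, detail):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"agent_id": agent_id, "action": action, "detail": detail}
        self.entries.append(
            {"record": record, "prev_hash": prev_hash, "hash": _digest(prev_hash, record)}
        )

    def verify(self):
        """Recompute the chain; return False if any entry was altered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("billing-agent", "issue_refund", "order 1001")
log.append("billing-agent", "update_record", "customer 42")
print(log.verify())
```

Because each entry records which agent took which action, the same structure supports the attribution requirement described above.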

Assigning Ownership and Defining Escalation Paths

Every AI agent should have a named owner and a documented escalation protocol. Assign "Agent Supervisors" and "AI Product Owners" responsible for risk posture, access, and outcomes, and document incident workflows that clarify who declares severity, who pauses or terminates the agent, and who communicates internally and externally. Organizations routinely struggle without this clarity, leading to paralysis in moments that demand decisive action.

High-priority playbooks should specify:

- Triggers (policy violations, anomaly thresholds, user harm)

- Containment (isolate agent, revoke tokens, disable tools)

- Remediation (rollback, patch policy, retrain, add guardrails)

- Communication (stakeholders, legal, regulators, customers)

- Post-incident review (root cause, lessons, ownership updates)

Implementing Risk-Based Controls and Human-in-the-Loop Mechanisms

Risk-based controls tailor safeguards to an agent's potential impact, access level, and degree of autonomy. Higher autonomy or sensitive permissions demand stronger measures: human-in-the-loop checkpoints, emergency shutdowns, and independent testing. Lower-risk agents can operate with lighter controls and wider automation.

One underappreciated risk in this area is skill atrophy. When agents consistently handle decisions that humans previously made, the human operators responsible for oversight gradually lose the expertise needed to meaningfully audit, override, or correct agent behavior. Governance programs should actively counter this by ensuring oversight staff remain involved in periodic decision reviews, not just incident response. A human-in-the-loop mechanism only works if the human in that loop retains genuine competence to intervene.

| Example Use Case | Required Controls | Frequency of Review |
| --- | --- | --- |
| Customer support chatbot | Basic monitoring, manual override | Quarterly |
| Financial transaction agent | Human-in-the-loop, kill switch | Monthly |
| Autonomous supply chain AI | External audit, emergency shutdown | Weekly |
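
The mapping from risk to controls can be expressed as a small lookup. The sketch below assumes a hypothetical 1-to-3 score for autonomy, access, and impact, and hypothetical cut-offs between tiers; only the control sets and review frequencies come from the examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    name: str
    autonomy: int  # 1 = suggests only, 3 = acts without review (assumed scale)
    access: int    # 1 = read-only, 3 = moves money or changes records
    impact: int    # 1 = low business impact, 3 = high


# Control sets and review cadence taken from the example use cases.
CONTROLS_BY_TIER = {
    "low": (("basic monitoring", "manual override"), "quarterly"),
    "medium": (("human-in-the-loop", "kill switch"), "monthly"),
    "high": (("external audit", "emergency shutdown"), "weekly"),
}


def risk_tier(profile):
    """Classify an agent using assumed score cut-offs (5 and 8)."""
    score = profile.autonomy + profile.access + profile.impact
    if score >= 8:
        return "high"
    if score >= 5:
        return "medium"
    return "low"


def required_controls(profile):
    """Return (controls, review_frequency) for the agent's tier."""
    return CONTROLS_BY_TIER[risk_tier(profile)]


chatbot = AgentProfile("customer support chatbot", autonomy=1, access=1, impact=1)
payments = AgentProfile("financial transaction agent", autonomy=2, access=3, impact=2)
supply = AgentProfile("autonomous supply chain AI", autonomy=3, access=3, impact=3)

for agent in (chatbot, payments, supply):
    print(agent.name, risk_tier(agent), required_controls(agent))
```

The scoring itself matters less than having one: a documented, repeatable rule lets reviewers challenge a tier assignment instead of debating each agent from scratch.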

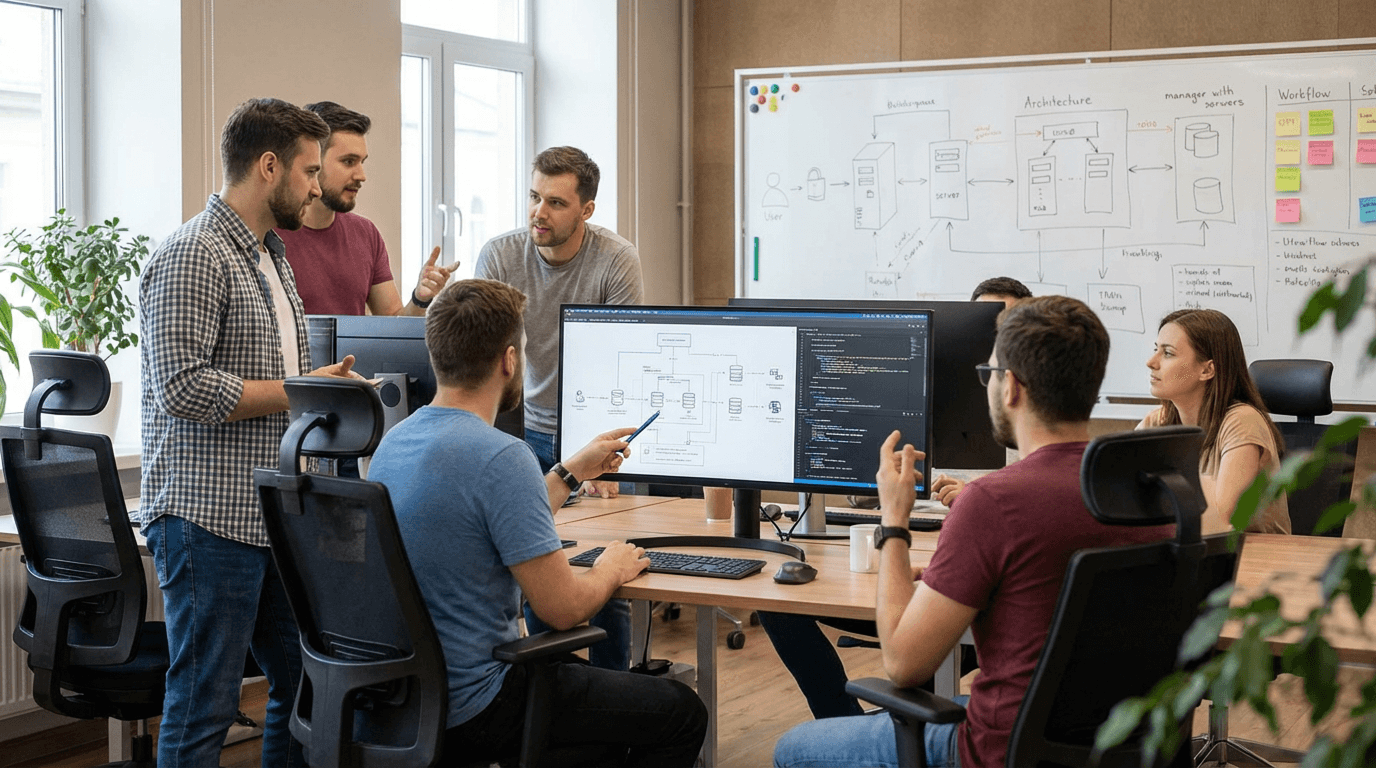

Integrating Technical and Organizational Governance Measures

Make AI agent governance policy enforceable in production. Layer technical controls (AI gateways, centralized audit trails, and role-appropriate access provisioning) with organizational practices such as a cross-functional governance team, regular upskilling, and explicit ownership mapping.

Addressing shadow AI requires more than technical controls: it requires a cultural shift where engineering teams understand that ungoverned agent deployments create enterprise-wide liability, not just local shortcuts. Governance policies must be visible, enforced at the pipeline level, and communicated as a team standard rather than a compliance obligation imposed from above.

Success looks like measurable reductions in policy violations, complete and queryable audit trails, and faster incident mitigation. Many enterprises operationalize this through a unified "AI gateway" or "audit layer" that centralizes enforcement across models, agents, and tools.

Machine-learning–powered agents often make decisions that humans can't fully interpret. Boards must navigate this transparency-versus-capability trade-off deliberately — because the stakes on both sides are real.

Where full explainability is non-negotiable

In certain contexts, explainability isn't a preference — it's a legal and ethical requirement. These include:

- Adverse actions in lending, insurance, or credit decisions

- Healthcare triage or clinical decision support

- Any agent decision that materially affects a customer's rights, access, or financial outcome

In these contexts, organizations should adopt:

- Explainable AI overlays — layered systems that translate agent reasoning into human-readable logic

- User-facing decision summaries — clear, plain-language explanations of why a decision was made

- Full provenance tracing — a complete record of the data, rules, and pathways that led to the outcome

The cost of getting it wrong

The consequences of under-investing in explainability extend well beyond internal failure. In Moffatt v. Air Canada (2024), a British Columbia tribunal held the airline liable after its website chatbot gave a customer incorrect information about bereavement fares. The ruling sent a clear signal: organizations cannot disclaim responsibility for agent outputs simply because the agent acted autonomously. If an agent makes decisions that affect customers or third parties, the organization must be able to explain and stand behind them, so treat explainability as a legal exposure, not an engineering nice-to-have.

Where selective transparency is acceptable

Not every deployment requires the same level of explainability. In lower-risk, high-speed operational workflows, selective transparency can preserve performance without meaningfully increasing risk, provided it is paired with:

- Strong continuous monitoring

- Rollback capabilities for anomalous decisions

- Clear escalation paths when agent behavior deviates from expected parameters

The goal is not maximum transparency everywhere. It is the right level of transparency for the stakes involved.

Addressing Systemic Risks in Multi-Agent Ecosystems

Systemic risk arises from interactions among agents (their communications, coordination patterns, and shared tools), where one agent's failure can cascade across the network. Research shows single-agent robustness is insufficient; resilience requires authenticated communication standards, isolation strategies, and cross-agent incident response. Emerging standards for secure inter-agent protocols and observability layers will be critical as multi-agent ecosystems grow in size and business impact.

Checklist for system-level hardening:

- Authenticated, signed messaging between agents and tools

- Sandboxing and least-privilege isolation for each agent capability

- Multi-agent observability with correlation IDs and cross-agent tracing
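
As an illustration of the first and third checklist items, the sketch below signs each inter-agent message with an HMAC and carries a correlation ID for cross-agent tracing. It uses only the Python standard library. The envelope fields and the shared-secret setup are simplifying assumptions; a production design would more likely use per-agent asymmetric keys and add replay protection.

```python
import hashlib
import hmac
import json


def _canonical(envelope):
    """Serialize deterministically so sender and receiver hash the same bytes."""
    return json.dumps(envelope, sort_keys=True).encode("utf-8")


def sign_message(secret, sender, correlation_id, body):
    """Wrap a message body in a signed envelope."""
    envelope = {"sender": sender, "correlation_id": correlation_id, "body": body}
    signature = hmac.new(secret, _canonical(envelope), hashlib.sha256).hexdigest()
    return {**envelope, "signature": signature}


def verify_message(secret, message):
    """Return True only if the signature matches the envelope contents."""
    envelope = {key: value for key, value in message.items() if key != "signature"}
    expected = hmac.new(secret, _canonical(envelope), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message.get("signature", ""))


SECRET = b"demo-only-shared-secret"  # hypothetical; load from a secrets manager

message = sign_message(SECRET, "planner-agent", "req-7f3a", {"task": "reorder stock"})
print(verify_message(SECRET, message))

tampered = {**message, "body": {"task": "wire funds"}}
print(verify_message(SECRET, tampered))
```

A receiving agent that rejects any message failing verification cannot be steered by a forged or altered instruction, which limits how far one compromised agent can push a failure through the network.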

Legal and Regulatory Considerations for AI Agent Accountability

The regulatory landscape for AI agents is moving from voluntary guidance toward binding obligation. Executives can no longer treat compliance as a future consideration. Three frameworks now define the enterprise baseline: two voluntary (the NIST AI RMF and ISO 42001) and one legally binding (the EU AI Act), whose deadlines are already in motion.

The three foundational frameworks

NIST AI Risk Management Framework (AI RMF): The NIST AI RMF provides actionable guidance for identifying AI risks, implementing controls, and maintaining continuous oversight. It is built around four core functions:

- Govern — establish policies, roles, and accountability structures

- Map — identify and categorize AI risks across the organization

- Measure — assess and analyze those risks with defined metrics

- Manage — prioritize and implement controls based on risk severity

ISO 42001: ISO 42001 is the international standard for AI management systems. It establishes requirements for developing, implementing, and maintaining governance frameworks that align with organizational objectives while managing AI-related risks systematically. Organizations seeking a globally recognized compliance posture should treat ISO 42001 as foundational infrastructure.

The EU AI Act: The EU AI Act introduces legally binding obligations for high-risk AI systems, including mandatory conformity assessments, risk management systems, and post-market monitoring. Its phased implementation is already under way:

- February 2025: prohibited AI practices banned; AI literacy obligations begin

- August 2025: obligations for general-purpose AI (GPAI) models take effect

- August 2026: most remaining provisions apply, including requirements for high-risk systems listed in Annex III, such as credit scoring and certain insurance risk assessments

- August 2027: requirements apply to high-risk systems embedded in products already covered by EU product-safety legislation

Beyond the three frameworks: additional legal exposure

Regulatory frameworks are not the only source of legal accountability. Executives should also account for:

- Principal–agent theory applied to AI — courts and regulators are increasingly applying delegated authority principles to AI systems, raising questions about traceability and responsibility allocation across model providers, platform operators, and deploying organizations

- Sectoral laws — HIPAA, GDPR, and data sovereignty regulations remain fully in force. Misaligned agent permissions or cross-border data flows can trigger violations entirely independently of AI-specific rules

- Third-party agent risk — organizations deploying externally built AI agents must ensure vendor contracts explicitly address data-use restrictions, AI-specific indemnities, and training data provenance. Deployer obligations under the EU AI Act apply regardless of whether the agent was built in-house or procured from a vendor

Practical Steps for Executives to Operationalize AI Agent Governance

A pragmatic, repeatable playbook accelerates AI governance maturity:

- Inventory all agents in production and high-fidelity pilots.

- Map each agent's access, autonomy, data sensitivity, and business impact.

- Flag high-risk, high-autonomy agents for enhanced controls.

- Mandate tamper-evident observability for all critical paths.

- Require independent safety reviews for high-impact agents.

- Establish incident protocols and named business ownership, and rehearse them.

- Define a decommissioning policy for every agent at deployment time — specifying credential revocation, data deletion, audit trail preservation, and ownership reassignment. A deprecated agent with live credentials is a security risk, not a solved problem.
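
The inventory, mapping, and decommissioning steps above can be sketched as a simple agent register with a few queries. The `AgentRecord` fields and the two-level "low"/"high" scales are hypothetical simplifications; a real register would usually live in a CMDB or identity platform.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    name: str
    owner: str             # named business owner; empty string if unassigned
    autonomy: str          # "low" or "high" (assumed two-level scale)
    data_sensitivity: str  # "low" or "high"
    live_credentials: list = field(default_factory=list)
    retired: bool = False


def needs_enhanced_controls(inventory):
    """Active agents that are both high-autonomy and high-sensitivity."""
    return [
        agent.name for agent in inventory
        if not agent.retired
        and agent.autonomy == "high"
        and agent.data_sensitivity == "high"
    ]


def unowned_agents(inventory):
    """Active agents with no named owner."""
    return [agent.name for agent in inventory if not agent.retired and not agent.owner]


def retired_with_live_credentials(inventory):
    """Retired agents whose credentials were never revoked."""
    return [agent.name for agent in inventory if agent.retired and agent.live_credentials]


inventory = [
    AgentRecord("invoice-agent", "finance-ops", "high", "high", ["erp-token"]),
    AgentRecord("faq-bot", "", "low", "low"),
    AgentRecord("legacy-router", "platform", "low", "high", ["crm-token"], retired=True),
]

print(needs_enhanced_controls(inventory))
print(unowned_agents(inventory))
print(retired_with_live_credentials(inventory))
```

Running queries like these on a schedule turns the playbook from a one-time exercise into a standing control, and the last query surfaces exactly the deprecated-agent risk described above.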

Establishing a Cross-Functional AI Governance Council

Create an AI governance council spanning legal, security, operations, product/engineering, and business leadership. Its remit: review agent metrics, bias and audit reports, incident postmortems, and roadmap changes, ensuring governance maturity keeps pace with adoption.

A practical cadence is monthly incident review and quarterly bias audits, with KPIs tied to policy violation reduction, time-to-containment, and audit completeness. The council should also own regulatory tracking, maintaining current awareness of EU AI Act implementation milestones, NIST guidance updates, and sector-specific obligations as they take effect.

Developing Incident Response and Emergency Shutdown Protocols

Experts recommend emergency shutdown mechanisms for high-risk AI agents to prevent harm escalation. Define the incident lifecycle clearly: detection, isolation, containment, triage, recovery, and root cause analysis. Implement automated kill switches, manual overrides, and sandboxing to contain anomalous behavior, and rehearse scenarios such as prompt-injection defense, tool misuse, or data exfiltration attempts.
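
A kill switch is only useful if every agent action checks it. The sketch below shows one minimal way to wire that in, assuming a hypothetical `KillSwitch` object shared between the agent runtime and its monitors; isolation, credential revocation, and recovery are out of scope here.

```python
import threading


class AgentHalted(RuntimeError):
    """Raised when an action is attempted after the kill switch is tripped."""


class KillSwitch:
    """Thread-safe flag that a monitor or a human operator can trip."""

    def __init__(self):
        self._halted = threading.Event()
        self.reason = None

    def trip(self, reason):
        self.reason = reason
        self._halted.set()

    def is_tripped(self):
        return self._halted.is_set()


def run_action(kill_switch, action, *args):
    """Execute an agent action only while the kill switch is not tripped."""
    if kill_switch.is_tripped():
        raise AgentHalted(f"agent halted: {kill_switch.reason}")
    return action(*args)


def record_anomaly(kill_switch, anomaly_count, limit=3):
    """Automated trigger: trip the switch once anomalies reach the limit."""
    if anomaly_count >= limit:
        kill_switch.trip(f"{anomaly_count} anomalies reached limit of {limit}")


switch = KillSwitch()
print(run_action(switch, lambda amount: f"refunded {amount}", 25))

record_anomaly(switch, anomaly_count=3)
try:
    run_action(switch, lambda amount: f"refunded {amount}", 25)
except AgentHalted as error:
    print(error)
```

The same `trip` method serves both the automated trigger and a manual override, so rehearsals can exercise one code path for both.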

Leveraging AI Gateways and Unified Audit Layers

An AI gateway is a control plane between agents and enterprise systems that verifies actions, enforces policy, orchestrates human-in-the-loop checkpoints, and standardizes model and version control. A unified audit layer consolidates all agent prompts, tool calls, decisions, and outcomes into a searchable, tamper-evident system. Core capabilities to prioritize:

- Centralized policy enforcement (rate limits, PII redaction, action whitelists)

- Fine-grained access controls and token management for non-human identities

- Human approval workflows for high-impact actions

- End-to-end provenance and replay for investigations

- Real-time anomaly detection and automated containment hooks
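
To illustrate how a gateway can combine several of these capabilities, the sketch below checks an action against a per-agent allowlist, holds high-impact actions for human approval, and redacts email addresses from payloads. The policy table, agent and action names, and the single email pattern are all hypothetical; real PII detection needs far broader coverage.

```python
import re

# Deliberately simple pattern for demonstration; real PII detection is broader.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical per-agent policy table.
POLICIES = {
    "support-agent": {
        "allowed_actions": {"lookup_order", "issue_refund"},
        "needs_human_approval": {"issue_refund"},
    },
}


def redact_pii(text):
    """Replace email addresses with a placeholder before forwarding."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)


def evaluate_request(agent_id, action, payload, approved_by=None):
    """Decide whether the gateway forwards, holds, or denies an agent action."""
    policy = POLICIES.get(agent_id)
    if policy is None or action not in policy["allowed_actions"]:
        return {"decision": "deny", "reason": "action not on allowlist"}
    if action in policy["needs_human_approval"] and approved_by is None:
        return {"decision": "pending_approval", "reason": "human approval required"}
    return {"decision": "allow", "payload": redact_pii(payload)}


print(evaluate_request("support-agent", "delete_account", "user 42"))
print(evaluate_request("support-agent", "issue_refund", "order 1001"))
print(evaluate_request("support-agent", "lookup_order", "order for jane@example.com"))
```

Because every agent request passes through one function, each decision it returns is also a natural record to write into the unified audit layer.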

How Can Folio3 AI Help With Managing Governance and Bias in Production AI Agents?

Folio3 AI embeds governance, bias controls, and accountability frameworks at every stage of your agent lifecycle, from strategy through continuous optimization, so you scale responsibly.

AI Agent Strategy & Roadmapping

Folio3 AI assesses your governance risk exposure and builds an AI adoption roadmap with compliance checkpoints, bias controls, and oversight mechanisms embedded from day one.

Custom AI Agent Development

Every agent is built with defined authority boundaries, bias-aware training data evaluation, and auditability by default, using AutoGen, LangChain, and CrewAI powered by leading LLMs.

AI Agent Integration

Folio3 AI eliminates shadow AI risks at the integration layer by enforcing scoped credentials, secure data exchange protocols, and access controls across all connected enterprise platforms.

Maintenance & Optimization

Folio3 AI's ongoing maintenance services ensure your agents remain high-performing, compliant, and aligned with evolving governance standards through continuous tuning, monitoring, and proactive risk management updates.

Human-AI Experience Design

Folio3 AI designs explainability interfaces, user-facing decision summaries, and transparency indicators that keep human oversight meaningful and counter skill atrophy across your oversight teams.

Agent Training & Continuous Learning

Continuous fine-tuning, adversarial stress testing, and bias baseline reviews ensure agents improve over time in ways that strengthen governance posture rather than quietly undermining it.

Frequently Asked Questions

What distinguishes AI agents from traditional chatbots, and why does governance differ?

AI agents act autonomously, setting goals and executing multi-step tasks via tools and APIs, while chatbots primarily generate or retrieve responses to direct prompts. The governance difference is fundamental: traditional AI governance manages output risk, asking whether a response is correct and compliant. Agentic AI governance must also manage action risk, asking what the system is permitted to do and who is accountable when it acts without waiting for human confirmation.

How can organizations assign clear accountability for AI agent decisions?

Assign a named owner to each production AI agent, define documented escalation paths, and record responsibilities in a governance register so every agent action is traceable, auditable, and remediable. Governance councils should review ownership assignments regularly as agent capabilities expand, and decommissioning responsibilities should be defined at deployment time, not left undefined until an agent needs to be retired.

What are effective methods to detect and reduce bias in AI agents?

Audit training data and production outcomes for disparate impact, monitor continuously for bias drift using automated thresholds, and stress test agents against edge cases and diverse demographic scenarios. Establish quantitative bias baselines before go-live, set automated gating policies in the production pipeline, and commission periodic external reviews to validate internal methods.

How should executives incorporate AI agent governance into deployment workflows?

Bake governance into CI/CD pipelines with policy gates, observability by default, and decommissioning plans at the point of deployment. Commission external reviews for high-impact agents before go-live. Map each deployment against the applicable framework (NIST AI RMF, ISO 42001, or the EU AI Act) so compliance obligations are identified at launch rather than discovered during an audit.

What organizational changes support scalable and responsible AI agent management?

Stand up a dedicated AI governance council with cross-functional membership, establish formal roles such as AI Product Owners and Agent Supervisors, address shadow AI by making governance standards visible and enforceable at the team level, and invest in continuous upskilling to prevent skill atrophy in human oversight staff. Tie governance KPIs to measurable outcomes like policy violation rates and time-to-containment, not just compliance documentation.