The term “ETA” usually means “Estimated Time of Arrival”, but in technology it generally refers to the “Estimated Completion Time” of a computational process. This post deals specifically with estimating the completion time of a batch of long-running scripts that run in parallel.

Problem

A number of campaigns run in parallel to process data and prepare some lists. The running time of each campaign varies from 10 minutes to 10 hours or more, depending on the data. A batch of campaigns is considered complete when all campaigns have finished executing and their output has been re-sorted so that the data is mutually exclusive.

The goal is a solution that estimates the completion time of campaigns as accurately as possible, based on past data.

Data

We have very limited data available per campaign, taken from past executions of these campaigns:

Feature Engineering / Preprocessing

These are the campaigns for which past data is available. A batch can consist of one or many campaigns from the above list. The feature uses_recommendations was created during feature engineering. It helps the model differentiate between campaigns that depend on an over-the-network API and those that do not, so that the model can implicitly account for network lag.
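The source does not show how uses_recommendations was derived. One plausible way, sketched here under assumptions, is to flag campaigns whose ID appears in a known set of API-dependent campaigns. The campaign IDs and the helper name add_uses_recommendations are hypothetical.

```python
import pandas as pd

# Hypothetical IDs of campaigns that call a recommendations API over the network.
API_DEPENDENT_CAMPAIGNS = {"c_102", "c_205"}

def add_uses_recommendations(df: pd.DataFrame) -> pd.DataFrame:
    """Return a copy of df with a 0/1 uses_recommendations column."""
    out = df.copy()
    out["uses_recommendations"] = (
        out["campaign_id"].isin(API_DEPENDENT_CAMPAIGNS).astype(int)
    )
    return out

# Toy data for illustration only.
runs = pd.DataFrame({"campaign_id": ["c_101", "c_102", "c_205", "c_300"]})
flagged = add_uses_recommendations(runs)
```

A binary flag like this lets a tree-based model learn a separate duration pattern for network-bound campaigns without measuring the lag directly.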

Is this a Time Series Forecasting problem?

It could have been, but the analysis shows that the time of year does not have much impact on the data. So this problem can be tackled as a regression problem instead of a time series forecasting problem.

How did it turn into a regression problem?

The absolute difference in seconds between the start time and the end time of a campaign is a numeric variable that can serve as the target variable. This is what we are going to estimate using regression.
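As a small illustration of this target, the sketch below computes durations for two made-up runs. It also shows a pandas detail that matters for long campaigns: `.dt.total_seconds()` measures the whole interval, while `.dt.seconds` returns only the seconds component and drops whole days. The timestamps are invented for the example.

```python
import pandas as pd

# Two made-up runs: one of 10 minutes, one of 25 hours.
runs = pd.DataFrame({
    "start_time": ["2019-01-01 08:00:00", "2019-01-01 09:00:00"],
    "end_time":   ["2019-01-01 08:10:00", "2019-01-02 10:00:00"],
})
elapsed = (pd.to_datetime(runs["end_time"]) - pd.to_datetime(runs["start_time"])).abs()

# Whole interval in seconds, including full days.
duration_seconds = elapsed.dt.total_seconds()

# Seconds component only: the full day of the second run is not counted.
seconds_component = elapsed.dt.seconds
```

For runs shorter than 24 hours both give the same number, so the difference only shows up on the longest campaigns.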

Regression

Our input X is the data available, and output y is the time difference between start time and the end time. Now let’s import the dataset and start processing.

> import pandas as pd
> dataset = pd.read_csv('batch_data.csv')

Our data currently has no missing entries, but since this may become an automated process, it is better to handle NA values. The following command fills NA values in the numeric columns with the column mean.

> dataset = dataset.fillna(dataset.mean(numeric_only=True))
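To see what mean imputation does, here is a minimal sketch on a toy frame. The column rows_processed is hypothetical and is not taken from the original dataset.

```python
import numpy as np
import pandas as pd

# Toy frame with one missing numeric value.
toy = pd.DataFrame({
    "campaign_id": ["a", "b", "c"],
    "rows_processed": [100.0, np.nan, 300.0],
})

# Column means are computed over numeric columns only, then used as fill values.
filled = toy.fillna(toy.mean(numeric_only=True))
```

Missing values in non-numeric columns such as campaign_id are left untouched by this approach and would need their own rule.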

The output y is the difference between start time and end time of the campaign. Let’s set up our output variable.

> start = pd.to_datetime(dataset['start_time'])
> process_end = pd.to_datetime(dataset['end_time'])
> # total_seconds() counts the whole interval; .dt.seconds would drop whole days
> y = (process_end - start).dt.total_seconds()

y has now been extracted from the dataset, and we no longer need the start_time and end_time columns in X.

> X = dataset.drop(columns=['start_time', 'end_time'])

You might be wondering how the model will differentiate between the campaign_ids here, or between the values of any categorical feature in general.

Here is a quick recap if you already know the concept of one-hot encoding: it is a method that creates a toggle variable for each categorical value, so that a variable is 1 for the rows where the data belongs to that categorical value and all the other variables are 0.

If you don’t already know the concept, it is highly recommended to read more about it online and then come back to continue. We’ll use OneHotEncoder from the sklearn library.
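A minimal sketch of one-hot encoding on a toy campaign_id column may help here. The IDs "A", "B", "C" and "Z" are invented for illustration.

```python
import pandas as pd
from sklearn.preprocessing import OneHotEncoder

campaigns = pd.DataFrame({"campaign_id": ["A", "B", "A", "C"]})

# handle_unknown="ignore" encodes categories unseen during fit as all zeros.
encoder = OneHotEncoder(handle_unknown="ignore")
encoded = encoder.fit_transform(campaigns[["campaign_id"]]).toarray()

# Output columns follow the order of the learned categories.
categories = list(encoder.categories_[0])

# A campaign that was not present during fitting.
unseen = encoder.transform(pd.DataFrame({"campaign_id": ["Z"]})).toarray()
```

Each row of encoded has a single 1 in the column of its campaign, while the unseen campaign becomes a row of zeros instead of raising an error.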

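> from sklearn.compose import ColumnTransformer
> from sklearn.preprocessing import OneHotEncoder
> # One-hot encode column 0 (assumed to be campaign_id); the categorical_features argument no longer exists in current scikit-learn
> column_transformer = ColumnTransformer([('campaign', OneHotEncoder(handle_unknown='ignore'), [0])], remainder='passthrough', sparse_threshold=0)
> X = column_transformer.fit_transform(X)
> # Avoiding the Dummy Variable Trap is part of one hot encoding
> X = X[:, 1:]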

Now that the input data is ready, one final thing to do is to set aside some data to later test how well our algorithm performs. We set aside 20% of the data at random.

> # Splitting the dataset into the Training set and Test set
> from sklearn.model_selection import train_test_split
> X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2)
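The split can be checked on a toy array, as in the sketch below. The random_state argument is an addition that is not in the original code; it only makes the random split reproducible between runs.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Ten toy samples with two features each.
X_demo = np.arange(20).reshape(10, 2)
y_demo = np.arange(10)

# test_size=0.2 holds out 2 of the 10 samples.
X_tr, X_te, y_tr, y_te = train_test_split(
    X_demo, y_demo, test_size=0.2, random_state=0
)
```

Every sample lands in exactly one of the two sets, so the test set stays unseen during training.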

After trying linear regression, SVR (Support Vector Regressor), XGBoost and RandomForest on the data, it turned out that the linear and SVR models do not fit the data well. The other finding was that XGBoost and RandomForest performed close to each other on this data. Since the difference is slight, let’s move forward with RandomForest.
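A comparison of this kind can be sketched as a loop over candidate models. The data below is synthetic and invented purely for illustration, so the resulting numbers say nothing about the campaign data. XGBoost is left out because it is a separate package.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.svm import SVR

# Synthetic durations in seconds with a non-linear dependence on one feature.
rng = np.random.default_rng(0)
X_syn = rng.uniform(0, 10, size=(200, 3))
y_syn = 600 + 50 * X_syn[:, 0] ** 2 + rng.normal(0, 30, size=200)

X_tr, X_te, y_tr, y_te = train_test_split(
    X_syn, y_syn, test_size=0.2, random_state=0
)

candidates = {
    "linear": LinearRegression(),
    "svr": SVR(),
    "random_forest": RandomForestRegressor(n_estimators=100, random_state=1),
}

# Test RMSE in minutes for each candidate model.
rmse_minutes = {}
for name, model in candidates.items():
    model.fit(X_tr, y_tr)
    mse = mean_squared_error(y_te, model.predict(X_te))
    rmse_minutes[name] = float(np.sqrt(mse)) / 60
```

Printing rmse_minutes gives one number per model, which makes it easy to add or remove candidates without changing the evaluation code.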

> # Fitting Regression to the dataset
> from sklearn.ensemble import RandomForestRegressor
> # 'absolute_error' is the current name of the criterion formerly called 'mae'
> regressor = RandomForestRegressor(n_estimators=300, random_state=1, criterion='absolute_error')
> regressor.fit(X_train, y_train)

The regression has been performed and a regressor has been fitted on our data. Next, we check how good the fit is.

Performance Measure

We’ll use Root Mean Square Error (RMSE) as our measure of goodness. The lower the RMSE, the better the regression.
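To make the metric concrete, here is a worked RMSE calculation on three made-up predictions: square each error, average the squares, then take the square root. The durations are invented for the example.

```python
from math import sqrt

# Made-up actual and predicted durations, in seconds.
actual_seconds = [600, 1200, 3600]
predicted_seconds = [660, 1080, 3780]

# Errors are -60, 120 and -180 seconds; squaring removes the sign.
squared_errors = [(a - p) ** 2 for a, p in zip(actual_seconds, predicted_seconds)]

# Mean of the squared errors, then the square root.
rmse_seconds = sqrt(sum(squared_errors) / len(squared_errors))
rmse_in_minutes = rmse_seconds / 60
```

Because the errors are squared before averaging, one large miss raises the RMSE more than several small ones.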

Let’s ask our regressor to make predictions on our Training data, that is 80% of the total data we had. This will give a glimpse of training accuracy. Later we’ll make predictions on the Test data, the remaining 20%, which will tell us about the performance of this regressor on Unseen data.

If the performance on training data is very good, and the performance on unseen data is poor, then our model is Overfitting. So, ideally the performance on unseen data should be close to that on the training data.

> from sklearn.metrics import mean_squared_error
> from math import sqrt
> training_predictions = regressor.predict(X_train)
> training_mse = mean_squared_error(y_train, training_predictions)
> training_rmse = sqrt(training_mse) / 60 # Divide by 60 to turn it into minutes

We now have the training RMSE. Print it to see by how many minutes the predictions typically deviate from the actual values.

Now, let’s get the test RMSE.

> test_predictions = regressor.predict(X_test)
> test_mse = mean_squared_error(y_test, test_predictions)
> test_rmse = sqrt(test_mse) / 60

Compare test_rmse with training_rmse to see how well the regression performs on seen and unseen data.
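One simple way to put a number on that comparison is the ratio of test RMSE to training RMSE. The helper below is a hypothetical illustration, and the example values are made up.

```python
def generalization_gap(training_rmse: float, test_rmse: float) -> float:
    """Ratio of test RMSE to training RMSE.

    A ratio near 1 means similar error on seen and unseen data;
    a ratio far above 1 suggests overfitting.
    """
    if training_rmse <= 0:
        raise ValueError("training_rmse must be positive")
    return test_rmse / training_rmse

# Made-up values in minutes, for illustration only.
gap = generalization_gap(training_rmse=4.0, test_rmse=10.0)
```

Random forests usually fit their training data closely, so some gap is expected. What matters is how large it is.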

What’s next for you is to try fitting XGBoost, SVR and any other regression models that you think should fit this data well, and to see how the performance of the different models differs.

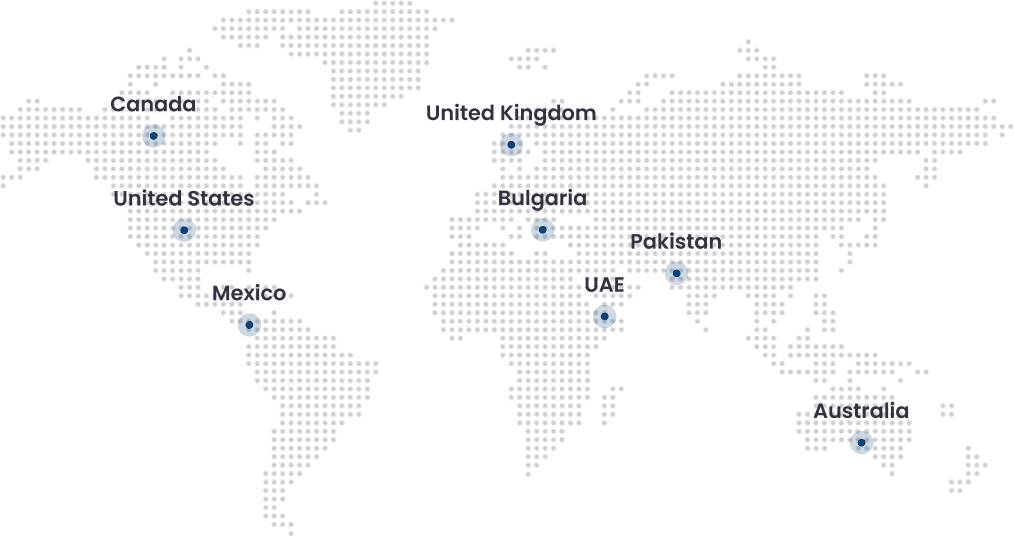

Connect with us for more information at Contact@folio3.ai