AI has reshaped almost every industry, from healthcare to marketing, and few sectors have not put it to use. One area where it is advancing quickly is human emotion detection.

The market for this technology is projected to grow from $19.5 billion in 2020 to $37.1 billion in 2026, a sign of strong demand.

By integrating emotion detection into their systems, companies can make robots and other devices interact with people more naturally. This blog explores how emotion recognition works and how it is used in different fields.

What Is AI Emotion Detection?

Emotions are hard to interpret: they are often mixed, subtle, and complex to decipher. Traditionally, companies observed their clients’ behavior in person, which is subjective and limited in accuracy.

AI algorithms for analyzing human emotions were developed to help IoT devices, robots, and other machines understand, and even simulate, the emotional side of human behavior. But what exactly does AI emotion detection entail?

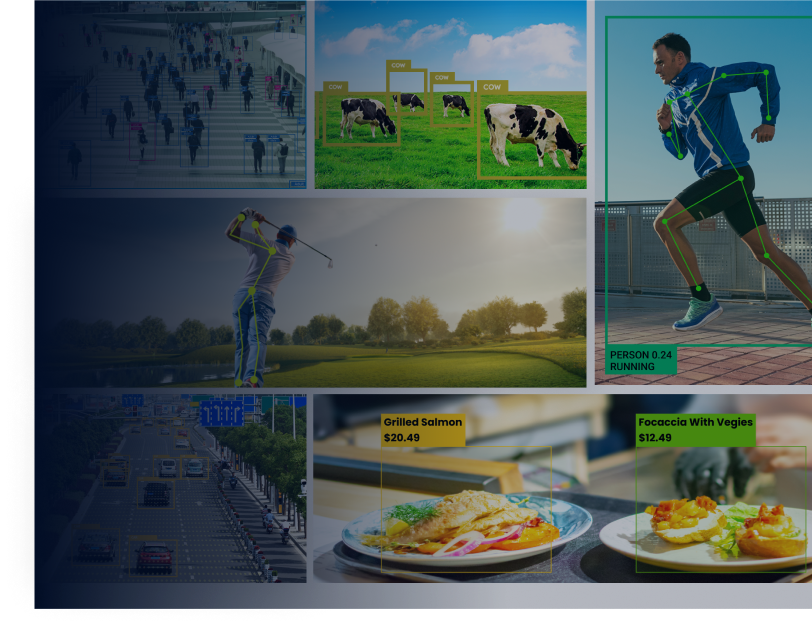

AI emotion detection is a core part of affective computing, the field concerned with giving machines the ability to recognize, interpret, and even simulate human feelings. It is one of the most widely adopted and exciting areas of AI.

Picture a computer that could tell how you’re feeling by looking at your face, hearing your voice, or noticing how you move. That’s what this field is all about: teaching computers to recognize and make sense of our emotions using the little cues we give off without even realizing it.

- Facial expressions – AI algorithms learn from vast datasets of faces displaying various emotions like happiness, sadness, anger, and surprise. They scrutinize facial features such as the mouth, eyes, and eyebrows to identify the corresponding emotions.

- Body language – Physical gestures, posture, and subtle movements also provide clues about emotional states. AI systems analyze these nonverbal cues to enhance their understanding of emotions.

- Voice tone – Speech patterns, including pitch, volume, and intonation, can convey emotions effectively. AI algorithms decode these vocal elements to deduce emotional states during real-time conversations.
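Systems that combine these cues often score each modality separately and then merge the results. The sketch below shows one common approach, weighted late fusion, assuming each modality’s model already outputs a probability per emotion. The function name `late_fusion` and the modality weights are illustrative assumptions, not tuned values from any real product.

```python
from typing import Dict

# Assumption: each modality's model returns {emotion label: probability}.
Scores = Dict[str, float]

def late_fusion(modality_scores: Dict[str, Scores],
                weights: Dict[str, float]) -> Scores:
    """Combine per-modality emotion probabilities with a weighted average."""
    fused: Scores = {}
    # Normalize weights over the modalities actually present
    total_weight = sum(weights[m] for m in modality_scores)
    for modality, scores in modality_scores.items():
        w = weights[modality] / total_weight
        for emotion, p in scores.items():
            fused[emotion] = fused.get(emotion, 0.0) + w * p
    return fused

if __name__ == "__main__":
    # Hypothetical outputs from face, voice, and body-language models
    scores = {
        "face":  {"happy": 0.7, "sad": 0.1, "angry": 0.2},
        "voice": {"happy": 0.4, "sad": 0.4, "angry": 0.2},
        "body":  {"happy": 0.5, "sad": 0.2, "angry": 0.3},
    }
    weights = {"face": 0.5, "voice": 0.3, "body": 0.2}  # illustrative weights
    fused = late_fusion(scores, weights)
    print(max(fused, key=fused.get), fused)
```

Late fusion keeps each modality’s model independent, so a system can still produce a result when, say, the camera is off and only audio is available.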

How Do Emotion Recognition AI Algorithms Work?

Emotion recognition technology goes beyond simply labeling how we feel. It interprets our reactions and moods, whether happy, sad, angry, or surprised. Take facial expressions as an example.

The system tracks the small muscle movements in a face, then compares the resulting pattern against an extensive database of labeled expressions to estimate how we feel.
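To make the “compare against a database” idea concrete, here is a toy sketch that matches observed facial action units (AUs, from the Facial Action Coding System) against a small table of prototype combinations. The `PROTOTYPES` table and the Jaccard-overlap matching are illustrative assumptions; production systems typically learn this mapping from large labeled datasets rather than using a fixed lookup.

```python
from typing import Dict, FrozenSet, Set

# Illustrative prototypes loosely based on commonly cited FACS AU combinations.
PROTOTYPES: Dict[str, FrozenSet[int]] = {
    "happy": frozenset({6, 12}),           # cheek raiser + lip corner puller
    "sad": frozenset({1, 4, 15}),          # inner brow raiser, brow lowerer, lip corner depressor
    "surprise": frozenset({1, 2, 5, 26}),  # brow raisers, upper lid raiser, jaw drop
    "angry": frozenset({4, 5, 7, 23}),     # brow lowerer, upper lid raiser, lid and lip tighteners
}

def jaccard(a: Set[int], b: FrozenSet[int]) -> float:
    """Overlap between two AU sets, from 0 (disjoint) to 1 (identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def match_expression(active_aus: Set[int]) -> str:
    """Return the prototype emotion whose AU set best overlaps the observed AUs."""
    return max(PROTOTYPES, key=lambda emotion: jaccard(active_aus, PROTOTYPES[emotion]))

if __name__ == "__main__":
    # Hypothetical detector output: cheek raiser, lip corner puller, lips part (AU25)
    print(match_expression({6, 12, 25}))
```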

Emotion recognition technology isn’t just for individuals; it’s also a significant asset for large corporations. For example, Google has integrated emotion recognition software into its Cloud Video Intelligence API.

Capabilities like this could help a company enhance the user experience across its services and platforms. For instance, if a user appears frustrated, a service might offer calming content to help ease that frustration.

Conversely, if someone seems joyful, they might receive suggestions for exciting news or content to match their mood.

Creators and marketers can also gauge how audiences react to videos. Understanding these emotional responses helps them create more engaging content. Here’s a breakdown of the steps involved in building an AI emotion recognition system:

Step 1 – Data Acquisition and Labeling

- Gather images, videos, or audio recordings showcasing various emotions, either in controlled settings or real-world scenarios.

- Have human annotators manually label each data point with the corresponding emotion (e.g., happy, sad, angry).
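Because annotators often disagree about emotions, a common practice is to collect several labels per item and aggregate them. A minimal sketch, assuming simple majority voting; the function `aggregate_labels`, its `min_agreement` threshold, and the file names are hypothetical.

```python
from collections import Counter
from typing import List, Optional

def aggregate_labels(annotations: List[str], min_agreement: float = 0.5) -> Optional[str]:
    """Majority vote over several annotators' labels for one data point.

    Returns None when the top label's share does not exceed min_agreement,
    so ambiguous samples can be reviewed instead of guessed.
    """
    if not annotations:
        return None
    label, count = Counter(annotations).most_common(1)[0]
    if count / len(annotations) <= min_agreement:
        return None
    return label

if __name__ == "__main__":
    raw = {
        "clip_001.jpg": ["happy", "happy", "surprise"],
        "clip_002.jpg": ["sad", "angry", "neutral"],  # no majority: flagged
    }
    for item, labels in raw.items():
        print(item, aggregate_labels(labels))
```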

Step 2 – Preprocessing

- Standardize the size and format of the data for efficient processing.

- Normalize values to a fixed range (e.g., 0 to 1) to make training more efficient.

- Eliminate unwanted background noise or distortions from the data.
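The three preprocessing steps can be sketched on a one-dimensional signal, such as an audio clip or a row of pixel intensities. This is a minimal pure-Python illustration under simple assumptions: zero-padding or truncation for size, min-max scaling to [0, 1], and a moving-average filter standing in for more sophisticated noise reduction. The target length of 8 is arbitrary.

```python
from typing import List

def pad_or_truncate(signal: List[float], length: int) -> List[float]:
    """Standardize size: cut long signals, zero-pad short ones."""
    return signal[:length] + [0.0] * max(0, length - len(signal))

def min_max_normalize(signal: List[float]) -> List[float]:
    """Rescale values to the range [0, 1]."""
    lo, hi = min(signal), max(signal)
    if hi == lo:
        return [0.0] * len(signal)
    return [(x - lo) / (hi - lo) for x in signal]

def moving_average(signal: List[float], window: int = 3) -> List[float]:
    """Smooth out short spikes with a centered window (simple noise reduction)."""
    half = window // 2
    out = []
    for i in range(len(signal)):
        chunk = signal[max(0, i - half): i + half + 1]
        out.append(sum(chunk) / len(chunk))
    return out

def preprocess(signal: List[float], length: int = 8) -> List[float]:
    """Size standardization, then denoising, then normalization."""
    return min_max_normalize(moving_average(pad_or_truncate(signal, length)))

if __name__ == "__main__":
    print(preprocess([5.0, 5.0, 5.0, 1.0]))
```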

Step 3 – Feature Extraction

- Detect and monitor crucial facial features such as eyebrow movements, lip corners, and wrinkle patterns.

- For audio data, analyze vocal features such as pitch, tone, and speaking rate.
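For the audio side, a few classic hand-crafted features can be computed directly from raw samples. The sketch below computes loudness (RMS energy), zero-crossing rate, and a pitch estimate via autocorrelation. It is a simplified illustration under assumed parameters (a synthetic 200 Hz tone sampled at 8 kHz, a 50 to 500 Hz pitch search range), not a production pitch tracker.

```python
import math
from typing import List

def rms_energy(frame: List[float]) -> float:
    """Loudness proxy: root-mean-square amplitude."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def zero_crossing_rate(frame: List[float]) -> float:
    """Fraction of adjacent sample pairs that change sign (needs >= 2 samples)."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)

def estimate_pitch(frame: List[float], sample_rate: int,
                   fmin: float = 50.0, fmax: float = 500.0) -> float:
    """Estimate the fundamental frequency (Hz) from the autocorrelation peak."""
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_corr = min_lag, float("-inf")
    # Search only lags that correspond to plausible voice pitches
    for lag in range(min_lag, min(max_lag, len(frame) - 1) + 1):
        corr = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag

if __name__ == "__main__":
    sr = 8000
    tone = [math.sin(2 * math.pi * 200 * n / sr) for n in range(800)]  # synthetic "voice"
    print(rms_energy(tone), zero_crossing_rate(tone), estimate_pitch(tone, sr))
```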

Step 4 – Model Training

- Use machine learning algorithms such as support vector machines (SVMs) or convolutional neural networks (CNNs) to train on the preprocessed data and extracted features.

- During training, the algorithm learns to associate specific feature combinations with corresponding emotions.
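SVMs and CNNs would normally come from a library such as scikit-learn or PyTorch. As a deliberately simpler stand-in, the sketch below uses a nearest-centroid classifier to show what “learning to associate feature combinations with emotions” means: training averages the feature vectors for each label, and prediction picks the closest average. The two features and all training values are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Vector = List[float]

def train_nearest_centroid(samples: Sequence[Tuple[Vector, str]]) -> Dict[str, Vector]:
    """'Training' here means averaging the feature vectors seen for each emotion."""
    sums: Dict[str, Vector] = {}
    counts: Dict[str, int] = defaultdict(int)
    for features, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in vec] for label, vec in sums.items()}

def predict(centroids: Dict[str, Vector], features: Vector) -> str:
    """Assign the label whose centroid is closest (squared Euclidean distance)."""
    def dist(c: Vector) -> float:
        return sum((a - b) ** 2 for a, b in zip(features, c))
    return min(centroids, key=lambda label: dist(centroids[label]))

if __name__ == "__main__":
    # Hypothetical 2-D features: [mouth-corner lift, brow lowering]
    training_data = [
        ([0.9, 0.1], "happy"), ([0.8, 0.2], "happy"),
        ([0.1, 0.9], "angry"), ([0.2, 0.8], "angry"),
    ]
    model = train_nearest_centroid(training_data)
    print(predict(model, [0.7, 0.3]))  # closest to the "happy" centroid
```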

Step 5 – Emotion Classification

- Analyze new, unseen data (images, videos, or audio recordings) using the trained model.

- Predict the most likely emotion based on the extracted features from the new data.
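Many classifiers, neural networks in particular, output one raw score per emotion; the classification step then converts those scores into probabilities and picks the highest. A minimal sketch, assuming the scores come from some already-trained model (the values below are made up):

```python
import math
from typing import Dict, Tuple

def softmax(logits: Dict[str, float]) -> Dict[str, float]:
    """Turn raw model scores into probabilities that sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - m) for k, v in logits.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def classify(logits: Dict[str, float]) -> Tuple[str, float]:
    """Return the most likely emotion and its probability."""
    probs = softmax(logits)
    best = max(probs, key=probs.get)
    return best, probs[best]

if __name__ == "__main__":
    # Hypothetical raw scores for one new, unseen image
    label, confidence = classify({"happy": 2.0, "sad": 0.5, "angry": 0.1, "surprise": 1.2})
    print(f"{label} ({confidence:.2f})")
```

Reporting the probability alongside the label lets downstream applications decide how much to trust a given prediction.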

Step 6 – Evaluation and Refinement

- Continuously evaluate the algorithm’s performance on a separate testing dataset to assess accuracy and identify areas for improvement.

- Refine the algorithm based on evaluation results, incorporating additional data or adjusting the training process as needed.
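Overall accuracy can hide weak spots, such as a model that rarely recognizes “sad”, so per-emotion metrics help identify areas for improvement. A minimal sketch, assuming you already have the true and predicted labels for a held-out test set; the sample labels are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple

def evaluate(y_true: List[str], y_pred: List[str]) -> Tuple[float, Dict[str, float], Counter]:
    """Overall accuracy, per-emotion recall, and (true, predicted) confusion counts."""
    confusion = Counter(zip(y_true, y_pred))
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    accuracy = correct / len(y_true)
    support = Counter(y_true)  # number of test samples per true emotion
    recall = {label: confusion[(label, label)] / n for label, n in support.items()}
    return accuracy, recall, confusion

if __name__ == "__main__":
    # Hypothetical labels for a held-out test set
    y_true = ["happy", "happy", "sad", "sad", "angry", "angry"]
    y_pred = ["happy", "happy", "sad", "angry", "angry", "sad"]
    acc, rec, conf = evaluate(y_true, y_pred)
    print(acc, rec)
    print(conf.most_common())  # which (true, predicted) pairs occur most
```

In this toy run, the confusion counts show “sad” and “angry” being mixed up with each other, which points to where more training data or better features are needed.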

Practical Applications of AI in Human Emotion Detection

This technology, while not flawless, shows promise for diverse applications across various fields. Here’s a breakdown of its current and potential use cases in specific areas:

1. Marketing Insights

Companies use AI emotion detection to analyze customer interactions (calls, chats, reviews) and respond in a way that acknowledges customers’ emotions. This helps improve customer service and lets marketers build campaigns around emotional responses.

Emotion detection is also used in A/B testing of marketing materials or product designs to determine which version elicits a stronger emotional response from customers.

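Example: Microsoft’s Azure Face API offered emotion recognition that companies could use to interpret customer responses to advertisements, helping them understand the emotional impact and effectiveness of their marketing campaigns. (Microsoft has since retired emotion inference from its facial analysis services.)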
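When emotion detection drives an A/B test, the per-viewer predictions can be treated as counts and checked with a standard statistical test. A sketch assuming each viewer is classified as showing the target emotion (say, “happy”) or not, compared with a two-proportion z-test; the function name and the counts are invented for illustration.

```python
import math
from typing import Tuple

def two_proportion_z_test(pos_a: int, n_a: int, pos_b: int, n_b: int) -> Tuple[float, float]:
    """Compare the share of viewers showing a target emotion under variants A and B.

    Returns (z statistic, two-sided p-value) using the normal approximation.
    """
    p_a, p_b = pos_a / n_a, pos_b / n_b
    pooled = (pos_a + pos_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all: nothing to test
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail probability
    return z, p_value

if __name__ == "__main__":
    # Hypothetical: 130 of 400 viewers classified "happy" for ad A, 95 of 400 for ad B
    z, p = two_proportion_z_test(130, 400, 95, 400)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

Because the emotion labels themselves come from an imperfect model, a significant difference here reflects detected emotions, not necessarily viewers’ true feelings.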

2. Mental Health Awareness

Human emotion detection is also valuable for identifying speech patterns or facial expressions that may indicate depression, anxiety, or other mental health issues. This capability allows for early detection and intervention, potentially leading to improved mental well-being for individuals.

Example: Beyond Verbal analyzes speech patterns to detect potential signs of anxiety or depression. It facilitates early intervention within mental healthcare.

3. Human-Computer Interaction (HCI)

This technology also helps make HCI more natural and empathetic. With emotion detection, machines can adapt their responses to the emotions they detect in users.

Example: Affectiva’s emotion AI technology enables computers to understand human emotions through facial expressions and tone of voice. It has been integrated into various applications, including virtual assistants, education software, and gaming systems.
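One simple way a system can act on detected emotions is a policy that maps each emotion to a response style, applied only when the detector is confident enough. The mapping, the function name, and the 0.7 threshold below are hypothetical.

```python
from typing import Dict

# Hypothetical mapping from detected emotion to a response style
RESPONSE_STYLE: Dict[str, str] = {
    "frustrated": "apologize briefly and offer a shortcut or a human agent",
    "confused": "slow down and rephrase with a simple example",
    "happy": "keep the current tone",
}
DEFAULT_STYLE = "respond neutrally"

def choose_response_style(emotion: str, confidence: float, threshold: float = 0.7) -> str:
    """Adapt only when the detector is confident; otherwise fall back to neutral."""
    if confidence < threshold:
        return DEFAULT_STYLE
    return RESPONSE_STYLE.get(emotion, DEFAULT_STYLE)

if __name__ == "__main__":
    print(choose_response_style("frustrated", 0.85))
    print(choose_response_style("frustrated", 0.40))
```

The confidence gate is the key design choice: a misread emotion does less harm when low-confidence predictions fall back to neutral behavior.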

4. Law Enforcement & Security (Ethical Concerns Exist)

AI emotion detection has also been explored in these sectors, with the aim of identifying potential threats or criminal behavior from emotional responses captured by video surveillance cameras.

Example: The Department of Homeland Security is experimenting with this technology to detect discomfort, anger, or hostility in airport travelers.

5. Entertainment & Gaming

This technology has found its way into the entertainment industry, specifically gaming. By integrating emotion recognition technology, game developers can create more immersive and personalized experiences for players.

Example: FaceRig uses face-tracking technology to animate digital avatars from players’ facial expressions in real time, creating a more immersive gaming experience.

6. Medical Diagnostics (Early-Stage Research)

Although it’s still in the early stages of research, emotion detection is being investigated for possible use in medical diagnosis. It could help diagnose autism spectrum disorder (ASD) or other conditions in which emotional expression plays a role.

By studying facial expressions and tone of voice, AI can spot patterns that suggest certain conditions, offering a new way to assist doctors in their assessments.

Example: Researchers at the University of Southern California are studying the use of emotion recognition technology in diagnosing ASD in children. They are developing a computer program that analyzes facial expressions to detect potential indicators of ASD.

7. Education

This technology is well suited to assessing student engagement during online lectures or presentations on any learning platform. AI emotion detection helps tutors adapt to students and tailor their approach to students’ emotional responses.

Example: Eedi leverages AI to analyze student emotions and adjust learning materials according to their responses, creating a more personalized learning experience.

Conclusion

AI’s growing ability to recognize human feelings is a big deal for the industry, and the numbers back this up: Fortune Business Insights estimates that the emotion recognition market could reach $74.80 billion by 2029.

It’s not just the market that makes it an attractive proposition. The potential to improve a variety of sectors makes it a desirable technology. From marketing and mental health to gaming and education, emotion detection has a promising future in improving the human experience.

Frequently Asked Questions (FAQs)

Q1: Is AI Emotion Detection Consistently Accurate?

Answer: Not consistently. AI has made significant advances, but it can still misinterpret emotions. Continuous learning and improved algorithms are, however, steadily increasing its accuracy over time.

Q2: How Is AI Emotion Detection Changing Healthcare?

Answer: It is helping healthcare spot potential mental health problems early. By analyzing how people express emotions, it can help doctors act sooner to improve patients’ mental health.

Q3: Can AI Understand Cultural Differences in Emotional Expression?

Answer: AI is getting better at recognizing cultural differences in emotional expression, but it still faces challenges because cultural norms are diverse and complex.

Q4: Are There Ethical Concerns With AI Emotion Detection?

Answer: Yes. The main concerns are consent, privacy, and the misuse of emotional data, particularly data that may have been collected illegally or without people’s consent.

Q5: What Does the Future Hold for AI in Human Emotion Detection?

Answer: The future of AI in human emotion detection looks bright. It offers opportunities for AI-driven educational tools, improved human-computer interaction, and enhanced emotional well-being support.

Dawood is a digital marketing pro and AI/ML enthusiast. His blogs on Folio3 AI are a blend of marketing and tech brilliance. Dawood’s knack for making AI engaging for users sets his content apart, offering a unique and insightful take on the dynamic intersection of marketing and cutting-edge technology.