Ever wonder how machines identify and label images?

As humans, we do not make much effort to understand an image.

Try it yourself: take a random picture and ask yourself what is present in the image. In the blink of an eye, you would say, for example, an animal (even if you could not say exactly which animal).

Machines mimic this human ability of image classification.

But, unlike us, they need a lot of effort to learn it. Training a machine to recognize objects and tell them apart can take millions of sample images.

What a machine learns from these training datasets is captured in an image classification model.

Image classification models are what actually do the labeling, and a machine’s ability to classify images depends largely on how well these models were trained.

To find out what these models look like, we’ll go over the basics of image classification and briefly discuss 3 pre-trained classification models.

Explaining Image Classification

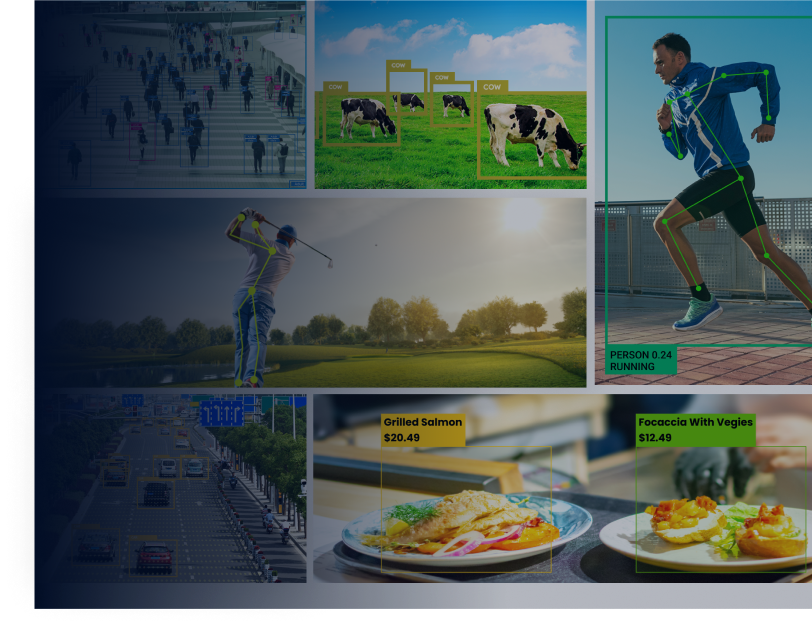

Image classification is an application of artificial intelligence and deep learning. It is the task of labeling images based on their characteristics and features.

Using deep learning algorithms, image classification applications identify similar features in images, distinguish objects, and assign labels to objects present in an image.
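The last step, assigning a label, can be sketched in a few lines. This is a minimal illustration, assuming the network has already produced one raw score per label; the labels and scores below are made up for the example and do not come from a real model.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_labels(scores, labels, k=2):
    """Return the k most likely (label, probability) pairs."""
    probs = softmax(scores)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Hypothetical labels and raw scores, for illustration only.
labels = ["cat", "dog", "keyboard"]
scores = [2.0, 1.0, -1.0]
best = top_labels(scores, labels)

for label, prob in best:
    print(f"{label}: {prob:.2f}")
```

The label with the highest probability is the one the application would report for the image.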

To label images, these applications rely on models that have been trained on labeled datasets. We call these image classification models.

Image Classification Models

Image classification models are trained on large collections of labeled example photos, often millions of them. They apply the patterns learned from those samples to each input image and return a label as the result.

To build an image classification model, we need to collect a large labeled dataset and tune the model to recognize the features of each object precisely.

If the model is well optimized, machines can give us quality results, but a poorly trained model hurts accuracy, which makes building a robust model quite challenging.

Luckily, we do not need to take on this challenge and start from scratch.

We can use pre-trained models for many classification tasks. Using pre-trained models is a highly effective approach. It saves both the cost and effort of building a new model.

The best part of opting for widely used pre-trained models is that they have already been evaluated on public benchmarks, so we have a good idea of their quality before we start.
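As a rough sketch of what using a pre-trained model can look like, the snippet below loads ResNet50 with ImageNet weights through Keras and classifies one image. It assumes TensorFlow is installed and that the weights can be downloaded on first use. The function names `classify_image` and `format_predictions` are made up for this example.

```python
def format_predictions(decoded):
    """Turn (class_id, label, score) tuples into readable lines."""
    return [f"{label}: {score:.1%}" for _, label, score in decoded]


def classify_image(path, top=3):
    """Classify one image file with ResNet50 pre-trained on ImageNet."""
    # Imported here so the helper above also works without TensorFlow.
    import numpy as np
    from tensorflow.keras.applications.resnet50 import (
        ResNet50,
        decode_predictions,
        preprocess_input,
    )
    from tensorflow.keras.utils import img_to_array, load_img

    model = ResNet50(weights="imagenet")  # downloads the weights on first use
    image = load_img(path, target_size=(224, 224))  # resize to the model input
    batch = preprocess_input(np.expand_dims(img_to_array(image), axis=0))
    predictions = model.predict(batch)
    # decode_predictions returns one list of tuples per image in the batch.
    return format_predictions(decode_predictions(predictions, top=top)[0])
```

Calling `classify_image("photo.jpg")`, where `photo.jpg` is a hypothetical local file, would return the three most likely ImageNet labels with their scores.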

Below, we cover 3 such pre-trained models which are widely used across the industry.

3 Pre-trained Image Classification Models

- EfficientNet

Tan and Le first proposed EfficientNet in 2019. It is among the most efficient models that still reach high levels of accuracy.

EfficientNet extracts features from images and passes them to a classifier. This makes EfficientNet the backbone of many classification tasks.

EfficientNet provides a family of models, from B1 to B7, scaled up from the baseline model B0. The family offers different accuracy and efficiency levels at various scales.

While the EfficientNet-B0 variant of the model contains 237 layers, EfficientNet-B7 has a total of 813 layers. The family achieves strong results on the CIFAR-100 and ImageNet datasets.

EfficientNet models suit complex tasks well and are more efficient than many competitors of similar accuracy.
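The idea behind scaling B0 up to larger variants can be sketched with the compound scaling rule from Tan and Le (2019), which grows depth, width, and input resolution together through a single coefficient. The constants 1.2, 1.1, and 1.15 are the values reported in that paper; the function names are made up here, and the released B1 to B7 variants use their own rounded settings, so this is an illustration of the rule rather than their exact configurations.

```python
# Scaling constants reported by Tan and Le (2019) for depth, width, resolution.
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15


def compound_scale(phi, base_resolution=224):
    """Return (depth multiplier, width multiplier, input resolution) for phi."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = round(base_resolution * GAMMA ** phi)
    return depth, width, resolution


def flops_multiplier(phi):
    """Approximate growth in compute: roughly doubles with each step of phi."""
    return (ALPHA * BETA ** 2 * GAMMA ** 2) ** phi


for phi in range(4):
    depth, width, resolution = compound_scale(phi)
    print(f"phi={phi}: depth x{depth:.2f}, width x{width:.2f}, "
          f"resolution {resolution}, compute x{flops_multiplier(phi):.2f}")
```

With `phi = 0` the multipliers are all 1, which corresponds to the B0 baseline at a 224×224 input.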

- VGG-16

VGG-16 is a popular image classification model developed at the University of Oxford.

Though it was introduced at the famous ILSVRC 2014 competition, the model remains widely used today.

Back then, VGG-16 raised the bar set by AlexNet and finished as runner-up in the classification task with a top-5 accuracy of 92.7 percent on ImageNet.

In response, researchers and the industry were fascinated by the model and quickly adopted it for image classification tasks.

VGG-16 is a 16-layer-deep convolutional neural network (CNN) architecture. Its pre-trained model has learned rich feature representations from over a million images.

It can classify images into 1000 object categories, such as mouse, keyboard, pencil, and many animals. The network takes input images of size 224×224 and converts them into features.
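To make the "16 layers" and the 224×224 input concrete, the sketch below walks through the layer layout of VGG-16 and counts its weight layers, the size of the final feature map, and the number of parameters. The layout (3×3 convolutions, 2×2 max-pooling, three fully connected layers) is taken from the published VGG-16 design rather than from this article, and the function name is made up for the example.

```python
# Numbers are output channels of 3x3 convolutions; "M" is a 2x2 max-pool.
VGG16_CONV = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
              512, 512, 512, "M", 512, 512, 512, "M"]
VGG16_DENSE = [4096, 4096, 1000]  # fully connected layers; 1000 output classes


def summarize_vgg16(input_size=224, input_channels=3):
    """Return (weight layers, final feature map shape, parameter count)."""
    size, channels = input_size, input_channels
    weight_layers, params = 0, 0
    for item in VGG16_CONV:
        if item == "M":
            size //= 2  # pooling halves height and width
        else:
            params += 3 * 3 * channels * item + item  # weights plus biases
            channels = item
            weight_layers += 1
    feature_map = (size, size, channels)
    units = size * size * channels  # flattened features fed to the classifier
    for out_units in VGG16_DENSE:
        params += units * out_units + out_units
        units = out_units
        weight_layers += 1
    return weight_layers, feature_map, params


layers, feature_map, params = summarize_vgg16()
print(layers, feature_map, params)
```

The 13 convolutional layers turn the image into features, and the three fully connected layers map those features to the 1000 categories.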

- ResNet50

The 50-layer-deep convolutional network, ResNet50, is a powerful model for various image classification tasks.

The more than a million images used to train the model come from the ImageNet database. The model has more than 23 million parameters, which gives it ample capacity for image classification.

The model uses skip connections, which add a block’s input directly to its output. It thus overcomes a limitation of plain deep networks such as VGG-16 by mitigating the vanishing gradient problem, which makes deep models hard to train.

ResNet50 can perform recognition tasks with lower error rates.
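A skip connection can be sketched without any deep learning library. The toy example below assumes a block that computes `transform(x) + x` and uses a made-up per-layer gradient of 0.01 to show why the added identity path keeps gradients from shrinking. It is an illustration of the idea, not ResNet50's actual layers.

```python
def residual_block(x, transform):
    """Return transform(x) + x: the block output plus its own input."""
    return [f + xi for f, xi in zip(transform(x), x)]


def zero_transform(x):
    """A layer that has learned nothing yet and outputs zeros."""
    return [0.0 for _ in x]


def gradient_through(depth, local_grad, skip):
    """Multiply per-layer gradients across a stack of `depth` layers.

    With a skip connection, each layer contributes local_grad + 1,
    because d(F(x) + x)/dx = F'(x) + 1.
    """
    grad = 1.0
    for _ in range(depth):
        grad *= (local_grad + 1.0) if skip else local_grad
    return grad


# The input passes through unchanged when the transform outputs zeros.
identity_out = residual_block([1.0, -2.0, 3.0], zero_transform)

# Toy numbers: 10 layers, each with a small local gradient of 0.01.
plain = gradient_through(10, 0.01, skip=False)
residual = gradient_through(10, 0.01, skip=True)
print(identity_out, plain, residual)
```

In the plain stack the gradient all but disappears after ten layers, while the version with skip connections stays close to 1.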

Doing amazing things with visual data does not always need to be difficult.

Conclusion

We have a variety of computer vision tasks such as instance segmentation, object detection, image processing, and image classification that help us derive insights from image data and make recommendations for real-life scenarios.

We do not need to collect huge datasets and build models from scratch to perform these tasks.

We can use models like ResNet50, VGG-16, or EfficientNet, which are already trained on huge datasets and perform classification at different accuracy levels.

With these pre-trained models, we can effectively perform many computer vision tasks at various accuracy levels and scales and save the cost of building new deep learning models from scratch.

Dawood is a digital marketing pro and AI/ML enthusiast. His blogs on Folio3 AI are a blend of marketing and tech brilliance. Dawood’s knack for making AI engaging for users sets his content apart, offering a unique and insightful take on the dynamic intersection of marketing and cutting-edge technology.